- cross-posted to:

- technology@beehaw.org

Instagram is profiting from several ads that invite people to create nonconsensual nude images with AI image generation apps, once again showing that some of the most harmful applications of AI tools are not hidden on the dark corners of the internet, but are actively promoted to users by social media companies unable or unwilling to enforce their policies about who can buy ads on their platforms.

While parent company Meta’s Ad Library, which archives ads on its platforms, who paid for them, and where and when they were posted, shows that the company has taken down several of these ads previously, many ads that explicitly invited users to create nudes and some ad buyers were up until I reached out to Meta for comment. Some of these ads were for the best known nonconsensual “undress” or “nudify” services on the internet.

Seen similar stuff on TikTok.

That’s the big problem with ad marketplaces and automation: the ads are rarely vetted by a human. You can just give them money, upload your ad, and they’ll happily display it. They rely entirely on users to report them, which most people don’t do because they’re ads, and they won’t take it down unless it’s really bad.

It’s especially bad on reels/shorts for pretty much all platforms. Tons of financial scams looking to steal personal info or worse. And I had one on a Facebook reel that was for boner pills that was legit a minute long ad of hardcore porn. Not just nudity but straight up uncensored fucking.

The user reports are reviewed by the same model that screened the ad up-front so it does jack shit

Actually, a good 99% of my reports end up in the video being taken down. Whether it’s because of mass reports or whether they actually review it is unclear.

What’s weird is the algorithm still seems to register that as engagement, so lately I’ve been reporting 20+ videos a day because it keeps showing them to me on my FYP. It’s wild.

That’s a clever way of getting people to work for them as moderators.

Okay this is going to be one of the amazingly good uses of the newer multimodal AI, it’ll be able to watch every submission and categorize them with a lot more nuance than older classifier systems.

We’re probably only a couple years away from seeing a system like that in production in most social media companies.

Nice pipe dream, but the current fundamental model of AI is not and cannot be made deterministic. Until that fundamental change is developed, it isn’t possible.

the current fundamental model of AI is not and cannot be made deterministic.

I have to constantly remind people about this very simple fact of AI modeling right now. Keep up the good work!

What do you mean? AI absolutely can be made deterministic. Do you have a source to back up your claim?

You know what’s not deterministic? Human content reviewers.

Besides, determinism isn’t important for utility. Even if AI classified an ad wrong 5% of the time, it’d still massively clean up the spammy advertisers. But they’re far, FAR more accurate than that.

https://www.sitation.com/non-determinism-in-ai-llm-output/

AI can be made deterministic, yes, absolutely.

The current design everyone is using (LLMs) cannot be made deterministic.

Again, you are wrong. Specifically ChatGPT may not be able to be deterministic since it’s a hosted service, but you absolutely can replay a prompt using the same random seed to get deterministic responses. Computer randomness isn’t truly random.

But if that’s not satisfying enough, you can also configure the temperature to be zero and system fingerprinting to always be the same, and that makes it even more deterministic, since it will always use the highest probability token.

For example, Llama can be fully deterministic. https://github.com/huggingface/transformers/issues/25507#issuecomment-1678498896
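To make the seed and temperature point concrete, here is a minimal toy sketch. It is not a real LLM: `TOY_LOGITS`, `sample_token` and `generate` are made-up names for illustration, and the scores are arbitrary. It only shows the two mechanisms mentioned above: replaying with the same seed reproduces the same samples, and temperature zero reduces sampling to always picking the highest-scoring token.

```python
import math
import random

def sample_token(logits, temperature, rng):
    """Pick a token index from raw scores (logits)."""
    if temperature == 0:
        # Greedy decoding: always take the highest-scoring token.
        return max(range(len(logits)), key=lambda i: logits[i])
    # Softmax with temperature, shifted by the max for numerical stability.
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    weights = [math.exp(x - peak) for x in scaled]
    return rng.choices(range(len(logits)), weights=weights, k=1)[0]

def generate(logits, temperature, seed, steps=20):
    # A private generator seeded explicitly, so a run can be replayed.
    rng = random.Random(seed)
    return [sample_token(logits, temperature, rng) for _ in range(steps)]

# Hypothetical scores for a four-token vocabulary (illustration only).
TOY_LOGITS = [2.0, 1.5, 0.3, -1.0]

same_seed_a = generate(TOY_LOGITS, temperature=1.0, seed=42)
same_seed_b = generate(TOY_LOGITS, temperature=1.0, seed=42)
greedy = generate(TOY_LOGITS, temperature=0, seed=7)

print(same_seed_a == same_seed_b)  # same seed, same sequence
print(greedy)                      # token 0 every time, whatever the seed
```

This covers only the sampling step. In a real deployment, floating-point differences across hardware, batching, or library versions can still change the scores themselves slightly, which may be part of why people end up talking past each other about what "deterministic" means.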

I love your wishful thinking. Too bad academia doesn’t agree with you.

Edit: also, I have to come back to laugh at you for trying to argue that the almost random nature of software random number generators is deterministic AI.

Please enlighten me then. Clearly people are doing it, as proved by the link I sent. Are you simply going to ignore that? Perhaps we have different definitions of determinism.

It’s all so incredibly gross. Using “AI” to undress someone you know is extremely fucked up. Please don’t do that.

I’m going to undress Nobody. And give them sexy tentacles.

Behold my meaty, majestic tentacles. This better not awaken anything in me…

Same vein as “you should not mentally undress the girl you fancy”. It’s just a support for that. Not that I have used it.

Don’t just upload someone else’s image without consent, though. That’s even illegal in most of europe.

It remains fascinating to me how these apps are being responded to in society. I’d assume part of the point of seeing someone naked is to know what their bits look like, while these just extrapolate with averages (and likely, averages of glamor models). So we still don’t know what these people actually look like naked.

And yet, people are still scorned and offended as if they were.

Technology is breaking our society, albeit in place where our culture was vulnerable to being broken.

Something like this could be career ending for me. Because of the way people react. “Oh did you see Mrs. Bee on the internet?” Would have to change my name and move three towns over or something. That’s not even considering the emotional damage of having people download you. Knowledge that “you” are dehumanized in this way. It almost takes the concept of consent and throws it completely out the window. We all know people have lewd thoughts from time to time, but I think having a metric on that…it would be so twisted for the self-image of the victim. A marketplace for intrusive thoughts where anyone can be commodified. Not even celebrities, just average individuals trying to mind their own business.

Exactly. I’m not even shy, my boobs have been out plenty and I’ve sent nudes all that. Hell I met my wife with my tits out. But there’s a wild difference between pictures I made and released of my own will in certain contexts and situations vs pictures attempting to approximate my naked body generated without my knowledge or permission because someone had a whim.

I think you might be overreacting, and if you’re not, then it says much more about the society we are currently living in than this particular problem.

I’m not promoting AI fakes, just to be clear. That said, AI is just making fakes easier. If you were a teacher (for example) and you’re so concerned that a student of yours could create this image that would cause you to pick up and move your life, I’m sad to say they can already do this and they’ve been able to for the last 10 years.

I’m not saying it’s good that a fake, or an unsubstantiated rumor of an affair, etc can have such big impacts on our life, but it is troubling that someone like yourself can fear for their livelihood over something so easy for anyone to produce. Are we so fragile? Should we not worry more about why our society is so prudish and ostracizing to basic human sexuality?

None of that is relevant. The issue being discussed here isn’t one of whether or not it’s currently possible to create fake nudes.

The original post being replied to indicated that, since AI, an artist, a photoshopper, whatever, is just creating an imaginary set of genitalia, and they have no ability to know if it’s accurate or not, there is no damage being done. That’s what people are arguing about.

The society we are living in can be handling things incorrectly but it can absolutely have real-world damaging effects. As a collective we should worry about our society, but individuals absolutely are and should be justified in worrying about their lives being damaged by this.

I think this is why it’s going to be interesting to see how we navigate this as a society. So far, we’ve done horribly. It’s been over a century now that we’ve acknowledged sexual harassment in the workplace is a problem that harms workers (and reduces productivity) and yet it remains an issue today (only now we know the human resources department will protect the corporate image and upper management by trying to silence the victims).

What deepfakes and generative AI do is make it easy for a campaign staffer, or an ambitious corporate ladder climber with a buddy with knowhow, or even a determined grade-school student to create convincing media and publish it on the internet. As I note in the other response, if a teen’s sexts get reported to law enforcement, they’ll gladly turn it into a CSA production and distribution issue and charge the teens themselves with serious felonies with long prison sentences. Now imagine if some kid wanted to make a rival disappear. Heck, imagine the smart kid wanting to exact revenge on a social media bully, now equipped with the power of generative AI.

The thing is, the tech is out of the bag, and as with a prince in the Mideast looking at cloned sheep (with deteriorating genetic defects) and hoping to create a clone of himself as an heir, humankind will use tech in the worst, most heinous possible ways until we find cause to cease doing so. (And no, judicial punishment doesn’t stop anyone.) So this is going to change society, whether we decide collectively that sexuality (even kinky sexuality) is not grounds to shame and scorn someone, or that we use media scandals the way Italian monastics and Russian oligarchs use poisons, and scandalize each other like it’s the shootout at O.K. Corral.

Thanks, I liked this reply. There is a lot of nuance here.

Wtf are you even talking about? People should have the right to control if they are “approximated” as nude. You can wax poetic about how it’s not necessarily correct, but that’s because you are ignoring the woman who did not consent to the process. Like, if I posted a nude then that’s on the internet forever. But now, any picture at all can be made nude and posted to the internet forever. You’re entirely removing consent from the equation, you ass.

I’m not arguing whether people should or should not have control over whether others can produce a nude (or lewd) likeness or perpetuate false scandal, only that this technology doesn’t change the equation. People have been accused of debauchery and scorned long before the invention of the camera, let alone digital editing.

Julia the Elder was portrayed (poorly, mind you) in sexual congress on Roman graffiti. Marie Antoinette was accused of a number of debauched sexual acts she didn’t fully comprehend. Marie Antoinette actually had an uninteresting sex life. It was accusations of The German Vice (id est lesbianism) that were the most believable and quickened her path to the guillotine.

The movie The Contender (2000) addresses the issue with happenstance evidence. A woman politician was caught on video in flagrante delicto at a frat party in her college years just as she was about to be appointed as a replacement Vice President.

Law enforcement still regards sexts between underage teens as child porn, and our legal system will gladly incarcerate those teens for the crime of expressing their intimacy to their lovers. (Maine, I believe, is the sole exception, having finally passed laws to let teens use picture messaging to court each other.) So when it comes to the intersection of human sexuality and technology, so far we suck at navigating it.

To be fair, when it comes to human sexuality at all, US society sucks at navigating it. We still don’t discuss consent in grade school. I can’t speak for anywhere else in the world, though I’ve not heard much good news.

The conversation about revenge porn (which has been made illegal without the consent of all participants in the US) appears to inform how society regards explicit content of private citizens. I can’t speak to paparazzi content. Law hasn’t quite caught up with Photoshop, let alone deepfakes and content made with generative AI systems.

But my point was, public life, whether in media, political, athletic or otherwise, is competitive and involves rivalries that get dirty. Again, if we, as a species actually had the capacity for reason, we would be able to choose our cause célèbre with rationality, and not judge someone because some teenager prompted a genAI platform to create a convincing scandalous video.

I think we should be above that, as a society, but we aren’t. My point was that I don’t fully understand the mechanism by which our society holds contempt for others due to circumstances outside their control, a social behavior I find more abhorrent than using tech to create a fictional image of someone in the buff for private use.

Sadly, fictitious explicit media can be as effective as a character assassination tool as the real thing. I think it should be otherwise. I think we should be better than that, but we’re not. I am, consequently frustrated and disappointed with my society and my species. And while I think we’re going to need to be more mature about it, I’ve opined this since high school in the 1980s and things have only gotten worse.

At the same time, it’s like the FGC-9: the tech cannot be contained any more than we can stop software piracy with DRM. Nor can we trust the community at large to use it responsibly. So yes, you can expect explicit media of colleagues to fly much the way accusations of child sexual assault flew in the 1990s (often without evidence in middle and upper management. It didn’t matter.) And we may navigate it pretty much the same way, with the same high rate of career casualties.

An artist doesn’t need your consent to paint/draw you. A photographer doesn’t need your consent if you’re in public. You likely posted your original picture in public (yay facebook). Unfortunately consent was never a concern here… and you likely gave it anyway.

Are you seriously saying that since I am walking in public I am giving consent to photos taken of me and turned nude?

You’ve lost your damn mind.

Nope. Quite the opposite in that consent is not required.

Edit: You have no right to restrict someone else from taking photos and videos while in public. Period. Their purpose and use doesn’t matter (commercial usage can be limited to some extents). https://lifehacker.com/know-your-rights-photography-in-public-5912250

There are limits regarding the right to take pictures in public. Instances of creepshot photographers have raised issues of good faith. For-purpose media (a street scene in the news, for instance) requires that any foreground person must have given consent, or must be censored out.

So, depending on your state and county (or nation) it may be a crime to take pictures of someone else with an intent to use them as a foreground element without their consent (explicit or otherwise).

There are limits regarding the right to take pictures in public.

This is why I said this…

commercial usage can be limited to some extents

But let’s look at it this way…

https://www.earthcam.com/world/ireland/dublin/?cam=templebar

https://www.earthcam.com/usa/florida/lauderdalebythesea/town/?cam=lbts_beach

https://www.earthcam.com/usa/florida/naples/?cam=naplespier

https://www.earthcam.com/world/southkorea/seoul/songridangil/?cam=songridan_gil

https://www.earthcam.com/world/israel/jerusalem/?cam=jerusalem

So why is nothing on this site blurred? If there are “limits”, why are there literally cameras being streamed of public places that can have faces in the foreground pretty clearly without consent? EVEN CHILDREN! WHO WILL THINK OF THE CHILDREN! (/s) Earthcam makes money doing this…

How about literally every company with a security camera?

Instances of creepshot photographers have raised issues of good faith.

This is never litigated under the issue of pictures in public. This is always done under stalking/harassment laws. None of them are ever just “He took a picture that I’m in”.

How about if I buy stock photos and feed that into the AI system? Does that count, since they didn’t intend for that to be its use? “Creepy” and “morally wrong” aren’t necessarily illegal. The concern isn’t the public photography, and ownership of the photo actually belongs to the person who takes the photo, not the subjects in it. So yes, you don’t particularly have much recourse unless you can prove damages that fall under some other law. Case in point: paparazzi… I mean there have literally been lawsuits where the settlement was in favor of the photographer AGAINST the subject https://sports-entertainment.brooklaw.edu/media/a-new-type-of-internet-troll-how-paparazzi-use-copyright-law-to-cash-out-on-celebritys-instagram-posts/ She used a photo on her insta from that paparazzi, the paparazzi sued, a settlement was reached and the photo was removed. She didn’t have a license to use that photo, even though it’s her in the picture. You can find this shit literally everywhere. We’ve already litigated this to death. Now we all think that paparazzi are generally scum… but that doesn’t make it illegal.

What is new here is whether an AI-generated thing counts as something special on its own from a legal perspective. The act of obtaining pictures while in public is not really a debate, even if they were obtained to create a derivative work. I fall on the side of the AI-generated thing being fair use. It’s a transformation of the original work and doesn’t violate your actual privacy (certainly not any more than taking pictures of a nude beach). IMO any other stance would negate so much other shit that we all rely on (meme-culture specifically) that it’s hypocritical to hold any other stance. Do I like that… Not really, but it doesn’t make sense otherwise.

The draw to these apps is that the user can exploit anyone they want. It’s not really about sex, it’s about power.

Human society is about power. It is because we can’t get past dominance hierarchy that our communities do nothing about schoolyard bullies, or workplace sexual harassment. It is why abstinence-only sex-ed has nothing positive to say to victims of sexual assault, once they make it clear that used goods are used goods.

Our culture agrees by consensus that seeing a woman naked, whether a candid shot, caught in flagrante delicto or rendered from whole cloth by a generative AI system, redefines her as a sexual object, reducing her qualifications as a worker, official or future partner. That’s a lot of power to give to some guy with X-ray Specs, and it speaks poorly of how society regards women, or human beings in general.

We disregard sex workers, too.

Violence sucks, but without the social consensus that propagates sexual victimhood, it would just be violence. Sexual violence is extra awful because the rest of society actively participates in making it extra awful.

Dude I can imagine people naked in my head.

Yes, I think this AI trend is sad, and using these services says a lot about what kind of person someone is. And it also says a lot about what kind of company Meta is.

I suspect it’s more affecting for younger people who don’t really think about the fact that in reality, no one has seen them naked. Probably traumatizing for them and logic doesn’t really apply in this situation.

Does it really matter though? “Well you see, they didn’t actually see you naked, it was just a photorealistic approximation of what you would look like naked”.

At that point I feel like the lines get very blurry, it’s still going to be embarrassing as hell, and them not being “real” nudes is not a big comfort when having to confront the fact that there are people masturbating to your “fake” nudes without your consent.

I think in a few years this won’t really be a problem because by then these things will be so widespread that no one will care, but right now the people being specifically targeted by this must not be feeling great.

It depends very much on the individual, apparently. I don’t have a huge data set, but there are girls I know who have had this happen to them, and some of them have just laughed it off and really not seemed like they cared. But again, they were in their mid twenties, not 18 or 19.

How dare that other person i don’t know and will never meet gain sexual stimulation!

My body is not inherently for your sexual stimulation. Downloading my picture does not give you the right to turn it into porn.

You get to tell me what i can and cannot think about in my own head?

WTF?

There is a huge ass difference between your personal thoughts and using a subjects social media, a database of existing nudes and AI to have REAL MEDIA produced.

Seriously, not even remotely similar, and it’s frankly disturbing that this is even your thought process.

If thought crime is a thing, I’m out.

That is crossing the Rubicon.

There is no harm done to you or your body with an AI generated image or video.

Blackmail and extortion are crimes of their own, as are rape and sexual assault.

But thinking about something and using tools to visualize it are not crimes.

Maybe society overreacts to nudity. Maybe society’s attitude to sex needs to change. Maybe oppression and regulation of sex has been a major form of control over society and oppression of certain groups.

People are too concerned with their own junk to see the actual issue.

lmao

It’s not thoughtcrime you giant crybaby.

But thinking about something and using tools to visualize it are not crimes.

This is SUCH a huge leap. You have a right to your thoughts, not databases, programming and services to generate media.

you giant crybaby

Stay classy, fascist.

You want to limit what data people can collect and share, and what programs they can write.

Why stop there?

Prohibit what paintings they can make. What drawings can be drawn. What words can be written.

Fascist.

Did you miss what this post is about? In this scenario it’s literally not your body.

There is nothing stopping anyone from using it on my body. Seriously, get a fucking grip.

Do you have nudes out there? Because if not then yes, that would stop people. The ai can’t magically reveal what’s actually under your clothes.

You’ve lost this argument when you don’t even know what the argument is about.

The problem isn’t what is actually under because only you or people you choose would know that, the problem is it appears like it’s what is actually under your clothes. What do you think people should do, say “That’s not what I actually look like naked, this is what I actually look like naked” or something?

Literally yes. As this becomes a more prevalent, widespread issue, eventually we’re going to reach a point where seeing a nude of someone is effectively meaningless, as it’s just as likely that it’s fake as it is real.

This is just a transitional phase. It’s going to be rough for sure, especially with how puritan and judgmental our culture is, but my point stands.

This is such a dumb argument. Nobody is claiming that the AI can show you what’s actually beneath a person’s clothes. The nudes being fake doesn’t resolve the ethical issue of creating porn of people who never agreed to it.

The people doing mental gymnastics about this stuff are just telling on themselves. Don’t make fake porn of real people, and if you do, be prepared to be rightfully treated as a sexual predator if anyone finds out.

Look at their next comment, that’s literally what they think is happening.

And it should resolve it. The idea of someone picturing us in their head, photoshopping us, or drawing us, can be incredibly creepy, yeah, but nobody has ever tried to make it illegal.

Also is this an argument of ethics or legality? They’re not inherently the same. Like, I think it’s unethical to insult random people in the street, but it sure as hell shouldn’t be illegal.

As for your last part, it’s funny because I’ve literally never done this. Ironically enough, I find it too creepy to even try, but in the same way that photoshopping or drawing someone nude would be. Incredibly creepy, but not illegal.

Scroll back up.

Undress any girl for free

Delete clothing

Entirely screw off with this gaslighting BS.

That’s their pitch, it’s their way of advertising to people. The ai isn’t literally psychic. All the ai is doing is guessing by using a database of thousands, tens of thousands, hundreds of thousands of naked bodies, and trying to fill in the blanks based on what it thinks yours probably looks like.

The AI isn’t magic, it doesn’t have the ability to somehow reveal what you look like without knowing. It’s the equivalent of really good photoshop effectively.

So we still don’t know what these people actually look like naked.

I think the offense is in the use of their facial likeness far more than their body.

If you took a naked super-sized barbie doll and plastered Taylor Swift’s face on it, then presented it to an audience for the purpose of jerking off, the argument “that’s not what Taylor’s tits look like!” wouldn’t save you.

Technology is breaking our society

Unregulated advertisement combined with a clickbait model for online marketing is fueling this deluge of creepy shit. This isn’t simply a “Computers Evil!” situation. It’s much more that a handful of bad actors are running Silicon Valley into the ground.

Not so much computers evil! as just acknowledging there will always be malicious actors who will find clever ways to use technology to cause harm. And yes, there’s a gathering of folk on 4Chan/b who nudify (denudify?) submitted pictures, usually of people they know, which, thanks to the process, puts them out on the internet. So this is already a problem.

Think of Murphy’s Law as it applies to product stress testing. Eventually, some customer is going to come in having broken the part you thought couldn’t be broken. Also, our vast capitalist society is fueled by people figuring out exploits in the system that haven’t been patched or criminalized (see the subprime mortgage crisis of 2008). So we have people actively looking to utilize technology in weird ways to monetize it. That folds neatly like paired gears into looking at how tech can cause harm.

As for people’s faces, one of the problems of facial recognition as a security tool (say when used by law enforcement to track perps) is the high number of false positives. It turns out we look a whole lot like each other, though your doppelganger may be in another state and ten inches taller / shorter. In fact, an old (legal!) way of getting explicit shots of celebrities from the late 20th century was to find a look-alike and get them to pose for a song.

As for famous people, fake nudes have been a thing for a while, courtesy of Photoshop or some other digital photo-editing set combined with vast libraries of people. Deepfakes have been around since the late 2010s. So even if generative AI wasn’t there (which is still not great for video in motion) there are resources for fabricating content, either explicit or evidence of high crimes and misdemeanors.

This is why we are terrified of AI getting out of hand, not because our experts don’t know what they’re doing, but because the companies are very motivated to be the first to get it done, and that means making the kinds of mistakes that cause pipeline leakage on sacred Potawatomi tribal land.

This is why we are terrified of AI getting out of hand

I mean, I’m increasingly of the opinion that AI is smoke and mirrors. It doesn’t work and it isn’t going to cause some kind of Great Replacement any more than a 1970s Automat could eliminate the restaurant industry.

It’s less the computers themselves and more the fear surrounding them that seems to keep people in line.

The current presumption that generative AI will replace workers is smoke and mirrors, though the response by upper management does show the degree to which they would love to replace their human workforce with machines, or replace their skilled workforce with menial laborers doing simpler (though more tedious) tasks.

If this is regarded as them tipping their hands, we might get regulations that serve the workers of those industries. If we’re lucky.

In the meantime, the pursuit of AGI is ongoing, and the LLMs and generative AI projects serve to show some of the tools we have.

It’s not even that we’ll necessarily know when it happens. It’s not like we can detect consciousness (or are even sure what consciousness / self awareness / sentience is). At some point, if we’re not careful, we’ll make a machine that can deceive and outthink its developers and has the capacity of hostility and aggression.

There’s also the scenario (suggested by Randall Munroe) that some ambitious oligarch or plutocrat gains control of a system that can manage an army of autonomous killer robots. Normally such people have to contend with a principal cabinet of people who don’t always agree with them. (Hitler and Stalin both had to argue with their generals.) An AI can proceed with a plan undisturbed by its inhumane implications.

I can see how increased integration and automation of various systems consolidates power in fewer and fewer hands. For instance, the ability of Columbia administrators to rapidly identify and deactivate student ID cards and lock hundreds of protesters out of their dorms with the flip of a switch was really eye-opening. That would have been far more difficult to do 20 years ago, when I was in school.

But that’s not an AGI issue. That’s an “everyone’s ability to interact with their environment now requires authentication via a central data hub” issue. And it’s illusory. Yes, you’re electronically locked out of your dorm, but it doesn’t take a lot of savvy to pop through a door that’s been propped open with a brick by a friend.

There’s also the scenario (suggested by Randall Munroe) that some ambitious oligarch or plutocrat gains control of a system that can manage an army of autonomous killer robots.

I think this fear heavily underweights how much human labor goes into building, maintaining, and repairing autonomous killer robots. The idea that a singular megalomaniac could command an entire complex system - hell, that the commander could even comprehend the system they intended to hijack - presumes a kind of Evil Genius Leader that never seems to show up IRL.

Meanwhile, there’s no shortage of bloodthirsty savages running around Ukraine, Gaza, and Sudan, butchering civilians and blowing up homes with sadistic glee. You don’t need a computer to demonstrate inhumanity towards other people. If anything, it’s our human-ness that makes this kind of senseless violence possible. Only deep ethnic animus gives you the impulse to diligently march around butchering pregnant women and toddlers, in a region that’s gripped by famine and caught in a deadly heat wave.

Would that all the killing machines were run by some giant calculator, rather than a motley assortment of sickos and freaks who consider sadism a fringe benefit of the occupation.

hmmm . i’m not sure we will be able to give emotion to something that has no needs, no living body, and doesn’t die. maybe. but it seems to me that emotions are survival tools that develop as beings and their environment develop, in order to keep a species alive. i could be wrong.

it’s totally smoke and mirrors. i’m amazed that so many people seem to believe it. for a few things, sure. most things? not a chance in hell.

Regardless of what one might think should happen or expect to happen, the actual psychological effect is harmful to the victim. It’s like if you walked up to someone and said “I’m imagining you naked” that’s still harassment and off-putting to the person, but the image apps have been shown to have much much more severe effects.

It’s like the demonstration where they get someone to feel like a rubber hand is theirs, then hit it with a hammer. It’s still a negative sensation even if it’s not a strictly logical one.

I think half the people who are offended don’t get this.

The other half think that it’s enough to cause hate.

Both arguments rely on enough people being stupid.

Interesting how we can “undress any girl” but I have not seen a tool to “undress any boy” yet. 😐

I don’t know what it says about people developing those tools. (I know, in fact)

Make one :P

Then I suspect you’ll find the answer is money. The ones for women simply just make more money.

This

I’ve seen a tool like that. Everyone was a bodybuilder and hung like a horse.

I’m going to guess all the ones of women have bolt on tiddies and no pubic hair.

Well of course. Sagging breasts are gross /s

Gotta wonder where they get their horse dick training images from

notices ur instance

Can’t judge though, I have a Chance myself lawl

pff no exotic-erotics?

Not yet! Nothing really tickled my fancy from there that I haven’t already got similar from BD. Haven’t looked in a while, though!

pffff

as if furries are the only ones obsessed with horse dicks

Where does Bad Dragon get them from?

Be the change you wish to see in the world

/s

You probably don’t need them. You can get these photos without even trying. Is a bit of a problem really.

You probably can with the same inpainting stable diffusion tools, it’s just not as heavily advertised.

Probably because the demand is not big or visible enough to make the development worth it, yet.

Lot of people in this thread who don’t seem to understand what sexual exploitation is. I’ve argued about this exact subject on threads like this before.

It is absolutely horrifying that someone you know could take your likeness and render it into a form for their own sexual gratification. It doesn’t matter that it’s ai rendered. The base image is still you, the face in the image is still your face, and you are still the object being sexualized. I can’t describe how disgusting that is. If you do not see the problem in that I don’t know what to tell you. This will be used on images of normal non-famous women. It will be used on pictures from the social media profiles of teenage girls. These ads were on a platform with millions of personal accounts of women and girls. It’s sickening. There is no consent involved here. It’s non-consensual pornography.

The AI angle is just buzzword fearmongering though - this is something you could do with photoshop back in the 90s (and people did, usually with celebrities and with varying levels of quality).

Photoshop was not advertised for its ability to make fake nudes. The purpose of Photoshop is not to make fake nudes. It is a general purpose image editor. These two things are distinctly different.

Middle school boys could not create realistic depictions of their classmates engaged in sex with Photoshop. At least not without significant time and effort. Now they can generate hundreds of photos in a matter of minutes.

You didn’t have to come out and tell everyone that you’re one of those guys who doesn’t understand the concept of sexual exploitation and consent.

It literally doesn’t matter what you call this. Colloquially the technology is known as “Generative AI”, and it is fully automating the task of making fake nudes to the point that shady websites only require a single input image, and with a few layers of machine learning, are able to spit out a convincing nude.

It was just as fucked up when perverts sexually exploited people with Photoshop, so I don’t understand what your point here is. “AI” has made sexual exploitation fully automated, and there’s absolutely no excuse for defending this.

so I don’t understand what your point here is

It’s that all the articles over the last year screaming about the dangers of AI because it can be used for something an interested high school student could use an image editor to do 30 years ago, just more easily and arguably at somewhat better quality (depending on the person using Photoshop), are being ridiculous because they’re blaming the technology instead of the weirdo using it to doctor an image of that girl at their school and pass it around. And yes, anyone who makes and distributes one of these images of someone should be nailed for revenge porn, harassment and whatever else might apply. I say “and distributes” only because if they never distribute it no one would ever know it exists, so there would be no opportunity to bust them.

The best use (ie only good use) for one of these is to feed it an image of something that is definitely not the right kind of image for it and see what horrors it invents trying to fill in the blanks. Hand it your buddy with a beer belly and a mountain man beard or a dog or garden gnome or something.

Generative AI is being used quite prominently for the purposes of making nonconsensual pornography. Just look at the state of CivitAI, the largest marketplace of Stable Diffusion models online. It pretends to be a community for Machine Learning professionals, but behind the scenes it’s laying the groundwork for all of the problems we’re seeing right now. There’s not an actress or female celebrity that doesn’t have a TI or LoRA trained on their likeness - and the galleries don’t hold back on showing you what these models can do.

At least Photoshop never gained the specific reputation of being a tool for making fake porn, but the GenAI community is leaving no doubt that this is a major use case for image models.

Even HuggingFace turns a blind eye to pornifying models and lolicon datasets, and they’re basically the GitHub of AI models…

Knowledge of fission is often applied to make nuclear bombs, but also to generate nuclear power. We shouldn’t blame AI as a whole for this just because some creeps use it for shitty applications.

That’s kinda why I brought up specific key players and how I consider them complicit. If you don’t want AI to be blamed as a whole, you should want those key players to behave ethically, or they’ll poison public perception of AI as a whole.

Wrong. That took time and effort and some level of knowledge from the user, meaning the end product was still somewhat rare. We already know that a decent “AI” image generator can spit these out in seconds with zero skill or knowledge required from the user.

So many of these comments are breaking down into arguments of basic consent for pics, and knowing how so many people are, I sure wonder how many of those same people post pics of their kids on social media constantly and don’t see the inconsistency.

There aren’t really many good reasons to post your kid’s picture anyway.

And yet that seems to be 60% of my wife’s facebook feed… I forbade her from posting our kids years ago.

AI gives creative license to anyone who can communicate their desires well enough. Every great advancement in the media age has been pushed in one way or another with porn, so why would this be different?

I think if a person wants visual “material,” so be it. They’re doing it with their imagination anyway.

Now, generating fake media of someone for profit or malice, that should get punishment. There’s going to be a lot of news cycles with some creative perversion and horrible outcomes intertwined.

I’m just hoping I can communicate the danger of some of the social media platforms to my children well enough. That’s where the most damage is done with the kind of stuff.

The porn industry is, in fact, extremely hostile to AI image generation. How can anyone make money off porn if users simply create their own?

Also I wouldn’t be surprised if it’s false advertising and clicking the ad will in fact just take you to a webpage with more ads, and a link from there to more ads, and more ads, and so on until eventually users give up (and hopefully click on an ad).

Whatever’s going on, the ad is clearly a violation of Instagram’s advertising terms.

I’m just hoping I can communicate the danger of some of the social media platforms to my children well enough. That’s where the most damage is done with the kind of stuff.

It’s not just your children you need to communicate it to. It’s all the other children they interact with. For example I know a young girl (not even a teenager yet) who is being bullied on social media lately - the fact she doesn’t use social media herself doesn’t stop other people from saying nasty things about her in public (and who knows, maybe they’re even sharing AI generated CSAM based on photos they’ve taken of her at school).

How can anyone make money off porn if users simply create their own?

What, you mean like amateur porn or…?

Seems like professional porn still does great after over two decades of free internet porn so…

I guess they will solve this one the same way, by having better production quality. 🤷

I think old people are the ones less likely to understand this stuff.

How old? My parents certainly understand this, my grandparents not so much, and my son not yet (5yo).

70 or older in my family. My dad’s wife just posted an excited post on Facebook about a Tesla Concorde taking off, and I had to explain to her that it’s a flight simulator. She’s 73.

I see, that is nearly as old as my grandparents 😮

youtube has been for like 6 or 7 months. even with famous people in the ads. I remember one for a while with Ortega

Ortega? The taco sauce?

NSFW-ish

I sat down with tacos as I opened up that reply.

Witch.

I guess that’s an ORTEGAsm

Don’t use that as lube.

Isn’t it kinda funny that the “most harmful applications of AI tools are not hidden on the dark corners of the internet,” yet this article is locked behind a paywall?

Good, let all celebs come together and sue zuck into the ground

It’s funny how many people leapt to the defense of Title V of the Telecommunications Act of 1996 Section 230 liability protection, as this helps shield social media firms from assuming liability for shit like this.

Sort of the Heads-I-Win / Tails-You-Lose nature of modern business-friendly legislation and courts.

Section 230 is what allows for social media at all given the problem of content moderation at scale is still unsolved. Take away 230 and no company will accept the liability. But we will have underground forums teeming with white power terrorists signalling, CSAM and spam offering better penis pills and Nigerian princes.

The Google advertising system is also difficult to moderate at scale, but since Google makes money directly off ads, and loses money when YouTube content is not brand safe, Google tends to be harsh on content creators and lenient on advertisers.

It’s not a new problem, and nudification software is just the latest version of X-Ray Specs (which is to say we’ve been hungry to see teh nekkid for a very long time). The worst problem is when adverts install spyware or malware onto your device without your consent, which is why you need to adblock Forbes Magazine…or really just everything.

However much of the world’s public discontent is fueled by information on the internet (Some false, some misleading, some true. A whole lot more that’s simultaneously true and heinous than we’d like in our society). So most of our officials would be glad to end Section 230 and shut down the flow of camera footage showing police brutality, or starving people in Gaza or fracking mishaps releasing gigatons of rogue methane into the atmosphere. Our officials would very much love if we’d go back to being uninformed with the news media telling us how it’s sure awful living in the Middle East.

Without 230, we could go back to George W. Bush era methods, and just get our news critical of the White House from foreign sources, and compare the facts to see that they match, signalling our friends when we detect false propaganda.

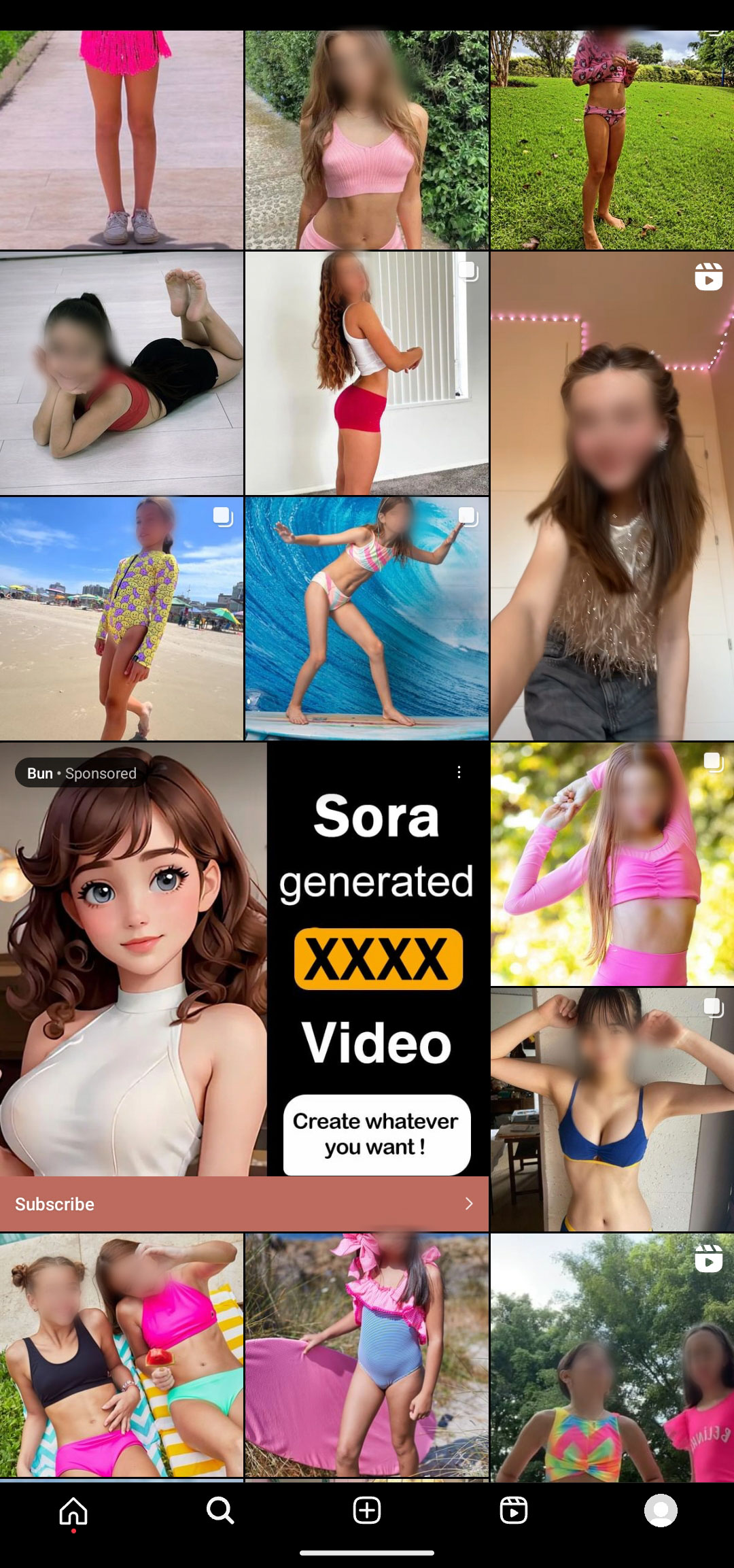

Sharing this screenshot again, to drive the point home.

What in the fuck are all these photos of kids? They’re not part of the ad?

This was from a test I did with a throwaway account on IG where I followed a handful of weirdo parents who run “model” accounts for their kids to see if Instagram would start pushing problematic content as a result (spoiler: yes they will).

It took about 5 minutes from creating the account to end up with nothing but dressed down kids on my recommendations page paired with inappropriate ads. I guess the people who follow kids on IG also like these recommended photos, and the algorithm also figures they must be perverts, but doesn’t care about the sickening juxtaposition of children in swimsuits next to AI nudifying apps.

Don’t use Meta products. They don’t care about ethics, just profits.

The other day, I had an ad on facebook that was basically lolicon. It depicted a clearly underage anime girl in a sexually suggestive position on a motorcycle with their panties almost off. I am in Germany, Facebook knows I am in Germany and if I took a screenshot of that ad and saved it, it would probably be classed as CSAM in my jurisdiction. I reported the ad and got informed that FB found “nothing wrong” with it a few days later. Fuck off, you child predators.

I logged into my throwaway account today just to check in on it since people are talking about this shit more. I was immediately greeted with an ad featuring hardcore pornography, among the pics of kids that still populate my feed.

I’ll spare you the screenshot, but IG is fucked.

okay enough internet for me today

Coupled with the article about pedos blackmailing kids with their fake nudes to get real ones, this makes my stomach turn and eyes water. So much evil in this world. I am happy to say I deleted my FB and IG accounts a few days ago. WhatsApp is tough to leave due to family though… Slowly getting people to switch over to safer and more ethical alternatives.

This 100%. I can’t even bring myself to buy new content for my Quest now that I’m aware of the issues (no matter how much I want the latest Beat Saber and Synth Riders DLC), especially since Meta’s Horizon, in my experience, puts adults into direct contact with children. At first I just dismissed metaverse games like VRChat or Horizon as being too popular with kids for me to enjoy it, but now I realize that it put me, an adult, straight into voice chats with tweens, which people should fucking know better than to do. My first thought was to log off because I wasn’t having fun in a kid-dominated space, but I have no doubt that these apps are crawling with creeps who see that as a feature rather than a problem.

We need education for parents that sharing pictures of their kids online comes with real risks, as does giving kids free rein to use the Internet. The laissez faire attitude many people have towards social media needs to be corrected, because real harm is already being done.

Most of the parents that post untoward pics of their kids online are chasing down opportunities for their kids to model, and they’re ignoring the fact that a significant volume of engagement these photos receive comes from people objectifying children. There seems to be a pattern that the most revealing outfits get the most engagement, and so future pictures are equally if not more revealing to chase more engagement…

Parents might not understand how disturbing these patterns are until they’ve already dumped thousands of pictures online, and at that point they’re likely to be in denial about what they’re exposing their kids to, and/or too invested to want to reverse course.

We also need to have a larger conversation, as a society, about using kids as models at all. Pretty much every major manufacturer of children’s clothing is hiring real kids to model the clothes. I don’t think it’s necessary to be publishing that many pictures of kids online, nor is it acceptable to be doing so for profit. There’s no reason not to limit modeling to adults who can consent to putting their bodies on public display, and using mannequins for kids’ clothing. The sheer volume of kids’ swimsuit and underwear pictures hosted on e-commerce sites is likely a contributor to the capability Generative AI models have to create inappropriate images of children, not to mention the actual CSAM found in the LAION dataset most of these models are trained on.

Sorry for the long rant, this shit pisses me off. I need to consider sending 404 Media everything I know since they’re doing investigations into this kind of thing. My small scale investigation has revealed a lot to me, but more people need to be getting as upset as I am about it if we want to make the Internet less of a hellscape.

Wow, that is really disturbing. WTF, IG?

My only hope is it also reduces CSAM of real people. Please at least do that much!

That’s what I was hoping too, and I’m sure for some it does, but I also saw an article the other day about people using these to blackmail children into giving real nudes, so fuck me I guess.

I still hope that for the majority of pedos this is solely something used to deter their urges. I’d like at least that much I mean ffs.

Well fuck. The shittiest timeline

No fucking kidding lmao

deleted by creator

What the fuck do you mean no?! This is happening right the fuck now. It’s already happening. You DON’T want it to decrease the total number of actual real children who are used and abused to feed this shit?

I think you think I’m supporting this in some way. I AM NOT. I’m saying I hope that any of the pedos out there who are using this instead of taking action against actual children will not have already harmed children, or that it will at the very least reduce the total harm done.

Christ, what the hell are we coming to if we can’t even try to find some fucking sanity in this situation.

And for all that is good and right in the world I also very much hope it doesn’t lead to MORE abuse.

Can we at least hope for the best while trying to fix the worst?

The pedos out there are using AI to nudify pictures of real kids. That’s just going to drive up the demand for creep shots and child model photosets to exploit.

There may be a small percentage of offending pedophiles that switch to pure GenAI over pictures of real kids, but I don’t see GenAI ever playing a role in harm reduction given the harm it ultimately enables.

One of the current sickening trends is for a predator to convince a kid to send underwear or swimsuit pics, and then blackmail them into more hardcore photos with nudified versions of the original pics. They’re already seeing an influx of that kind of CSAM online, that involves abusing real kids on social media.

I just wish America was less puritanical and taught kids about sex and boundaries to protect them, and that we had a functioning mental healthcare system that directly helps people who experience inappropriate sexual attractions like pedophilia before they go down these dark paths.

Look, we don’t know for sure. I’m grasping at silver linings made of straws. I don’t care how unlikely it is to be true, but there is a chance.

A chance that some day, in months, years, or decades, we will find out whether or not it worked out in the best way it could have, given what’s already happening. But we will get an answer that will be pretty hard to disagree with.

And I won’t be surprised when it isn’t what I’m hoping it might be. I won’t be devastated or have my world view shattered.

I’m not naive, I’m just hoping that we are wrong. Even if it is a bit ridiculous and there’s only evidence to the contrary along the way.

What we know today may not be what we understand next year

Truth is stranger than fiction. We have so many problems now that it’s fine if we WANT an easy win, even one we won’t be able to KNOW the answer to for AN amount of time yet. But only if we are honest with ourselves that just because we want something doesn’t mean it has to happen. I also know that today.

But holy hell my guy, I’m fucking grasping that straw. This shit is too bleak and we need something to keep us from taking vigilante action. What we don’t need is to stir the pot of fear, worry, and horror before it’s time to take action.

If we can’t see paths to better places we will have one hell of a hard time recognizing things that will help us get to that path. And if you don’t agree we need a new path, it might be too late for you.

I feel like you’ve just set me up for an FBI visit.

Send 'em to Zuck.

That makes me sick!! 😠

Google Play and co are allowing similar apps

The idea that the children in this photo are meant to be seen in the same context as a porn site (or at least something using the Pornhub logo likeness) is disgusting.

DISCLAIMER: I haven’t gone through this myself, but I know what porn addiction feels like. It’s not fun and will warp who you are on the inside.

Anyone lured for any reason to this site, DO NOT ENGAGE, it WILL HURT YOU! If for whatever reason they’ve put their hooks in you and are reeling you in, use strategies that Alcoholics Anonymous uses. LITERALLY ANYTHING is better than using pictures of REAL CHILDREN for sexual gratification.

ITT: A bunch of creepy fuckers who don’t think society should judge them for being fucking creepy

Am I the only one who doesn’t care about this?

Photoshop has existed for some time now, so creating fake nudes just became easier.

Also why would you care if someone jerks off to a photo you uploaded, regardless of potential nude edits. They can also just imagine you naked.

If you don’t want people to jerk off to your photos, don’t upload any. It happens with and without these apps.

But Instagram selling apps for it is kinda fucked, since it’s very anti-porn, but then sells apps for it (to children).

It’s about consent. If you have no problem with people jerking off to your pictures, fine, but others do.

If you don’t want people to jerk off to your photos, don’t upload any. It happens with and without these apps.

You get that that opinion is pretty much the same as those who say if she didn’t want to be harassed she shouldn’t have worn such provocative clothing!?

How about we allow people to upload whatever pictures they want and try to address the weirdos turning them into porn without consent, rather than blaming the victims?

Nah it’s more like: If she didn’t want people to jerk off thinking about her, she shouldn’t have worn such provocative clothing.

I honestly don’t think we should encourage uploading this many photos as private people, but that’s something else.

You don’t need consent to jerk off to someone’s photos. You do need consent to tell them about it. Creating images is a bit riskier, but if you make sure no one ever sees them, there is no actual harm done.

If you have no problem with people jerking off to your pictures, fine, but others do

Of course, but people have been doing this since the dawn of time. So unless the plan is to incorporate the Thought Police, there’s no way to actually stop it from happening.

Maybe, but we can certainly help by, amongst many other things, not advertising AI Nude Apps on Instagram. Ultimately what we shouldn’t be doing is blaming the victims by implying they are somehow at fault for having the audacity to upload pictures of themselves to the Internet.

I agree completely with the last part of your comment at least, that other comment unironically saying that it’s the women’s fault for dressing the way they do is bizarre and archaic.

I think it’s clear you have never experienced being sexualized when you weren’t okay with it. It’s a pretty upsetting experience that can feel pretty violating. And as most guys rarely if ever experience being sexualized, never mind when they don’t want to be, I’m not surprised people might be unable to empathize.

Having experienced being sexualized when I wasn’t comfortable with it, this kind of thing makes me kinda sick to be honest. People are used to having a reasonable expectation that posting safe for work pictures online isn’t inviting being sexualized. And that it would almost never be turned into pornographic material featuring their likeness, whether it was previously possible with Photoshop or not.

It’s not surprising people would find the loss of that reasonable assumption discomforting given how uncomfortable it is to be sexualized when you don’t want to be. How uncomfortable a thought it is that you can just be going about your life and minding your own business, and it will now be convenient and easy to produce realistic porn featuring your likeness, at will, with no need for uncommon skills not everyone has.

Interesting (wrong) assumption there buddy.

But why would I care how people think of me? If it influences their actions, we gonna start to have problems, tho.

Fair enough, I’m sorry for making assumptions about you.

I do think my points stand though

Also why would you care if someone jerks off to a photo you uploaded, regardless of potential nude edits. They can also just imagine you naked.

Imagining and creating physical (even digital) material are different levels of how real and tangible it feels. Don’t you think?

There is an active act of carefully editing those pictures involved. It’s a misuse and against your intention when you posted such a picture of yourself. You are losing control by that and unwillingly become part of the sexual act of someone else.

Sure, those who feel violated by that might also not like it if people imagine things, but that’s still a less “real” level.

For example: Imagining murdering someone is one thing. Creating very explicit pictures about it and watching them regularly, or even printing them and hanging them on the walls of one’s room, is another.

I don’t want to equate murder fantasies with sexual ones. My point is to illustrate that it feels to me and obviously a lot of other people that there are significant differences between pure imagination and creating something tangible out of it.

Oh no, hanging the pictures on your wall is fucked.

The difference is if someone else can reasonably find out. If I tell someone that I think about them/someone else while masturbating, that is sexual harassment. If I have pictures on my wall and guests could see them, that’s sexual harassment.

If I just have an encrypted folder, not a problem.

It’s like the difference between thinking someone is ugly and saying it.

That bidding model for ads should be illegal. Alternatively, companies displaying them should be responsible/be able to tell where it came from. Misinformation has become a real problem, especially in politics.

Capitalism works! It breeds innovation like this! good luck getting non consensual ai porn in your socialist government