Need to let loose a primal scream without collecting footnotes first? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid: Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post-Xitter web has spawned soo many “esoteric” right wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality-challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be).

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

(Semi-obligatory thanks to @dgerard for starting this, and happy new year in advance.)

An interesting thing came through the arXiv-o-tube this evening: “The Illusion-Illusion: Vision Language Models See Illusions Where There are None”.

Illusions are entertaining, but they are also a useful diagnostic tool in cognitive science, philosophy, and neuroscience. A typical illusion shows a gap between how something “really is” and how something “appears to be”, and this gap helps us understand the mental processing that lead to how something appears to be. Illusions are also useful for investigating artificial systems, and much research has examined whether computational models of perceptions fall prey to the same illusions as people. Here, I invert the standard use of perceptual illusions to examine basic processing errors in current vision language models. I present these models with illusory-illusions, neighbors of common illusions that should not elicit processing errors. These include such things as perfectly reasonable ducks, crooked lines that truly are crooked, circles that seem to have different sizes because they are, in fact, of different sizes, and so on. I show that many current vision language systems mistakenly see these illusion-illusions as illusions. I suggest that such failures are part of broader failures already discussed in the literature.

It’s definitely linked in with the problem we have with LLMs where they detect the context surrounding a common puzzle rather than actually doing any logical analysis. In the image case I’d be very curious to see the control experiment where you ask “which of these two lines is bigger?” and then feed it a photograph of a dog rather than two lines of any length. I’m reminded of how it was (is?) easy to trick ChatGPT into nonsensical solutions to any situation involving crossing a river because it pattern-matched to the chicken/fox/grain puzzle rather than considering the actual facts being presented.

Also now that I type it out I think there’s a framing issue with that entire illusion since the question presumes that one of the two is bigger. But that’s neither here nor there.

I think there’s a framing issue with that entire illusion since the question presumes that one of the two is bigger

I disagree, or rather I think that’s actually a feature; “neither” is a perfectly reasonable answer to that question, one a human being would give and LLMs would be fucked by, since they basically never go against the prompt.

Surprised this hasn’t been mentioned yet: https://www.rollingstone.com/culture/culture-news/meta-ai-users-facebook-instagram-1235221430/

Facebook and Instagram to add AI users. I’m sure that’s what everyone has been begging for…

Spam bots are good now!

I think it did come up a few weeks back, but it’s indeed a hilarious mess. the engagement must flow!

In my dreams, it won’t take long until all user interactions are AI driven and the people paying for ad space in that shit realize that, leading to an immediate crash of Meta’s finances.

hoping for a 2025 with solidarity, aid, and good opsec for everyone who needs it the most

Hopefully 2025 will be a nice normal year–

Cybertruck outside of Trump hotel explodes violently and no one can figure out if it was a bomb or just Cybertruck engineering

Huh. I guess it’ll be another weird one.

(I know I know, low effort post, I’m sick in bed and bored)

Hey, at least there’s no way the Elon simps can spin that, right?

Never mind.

They are also spinning it into “the car is so great you can’t do terrorism with it because of how strong it is”, which, considering the several recent acts of vehicle terrorism, seems very unwise.

Also, re ‘it would be different for the bystanders’: I think you can see in the explosion vid that there were not that many bystanders (which makes terrorism a bit less likely), and still 7 people were hurt (and the driver died). I’d wait a bit before drawing further conclusions.

Steel, like a pressure cooker

Somebody pointed out that I might have been wrong and steel might be a perfect shield for anything.


chalk it down to perp incompetence. a single direct hit with an old 155mm shell (7kg of explosive) can destroy a normal modern tank, never mind a car. no amount of shitty panels would contain anything even mildly substantial. there have been cases of suicide vests with a bigger charge than that (10kg) https://www.bbc.com/news/world-asia-66355032

i think you can see on the explosion vid there were not that many bystanders (which makes terrorism a bit less likely)

symbolic building (??) still makes sense as a target for terrorist attack

Sure, but I’d expect the perp to first use the Cybertruck to ram into the building, or at least move closer, and not park nicely. OTOH, if he was a terrorist, what do I know; I don’t exactly know what goes through their mind shortly before things at high speeds go through their mind.

parking like this raises less suspicion. maybe he wasn’t sure enough about whatever igniting mechanism he had, he could end up stuck in a wall unable to get out to look it up

instead of a high speed disassembly, dude just burned down in an automatically locked death trap. i guess he found that anticlimactic. not like isis (guessing) recruits the brightest minds out there

Yeah, the story is about to get weird. Your ISIS guess might not be far off. See this: same military base as the guy who drove into the crowds.

Writers of 2025: “Somehow ISIS returned.” (I know ISIS never left, the media just looked at it less, but I thought it would be a funny joke.)

i’ve seen that news piece on how they were in the same base and how they were deployed in afghanistan around the same time previously and that’s what i based this guess on

still, so far it could be anything else including complete coincidence. it’s like dude forgot everything, he was radioman but couldn’t make remote controlled detonator and didn’t use efficient charge for some reason

not only did isis never leave, i guess they controlled some territory at least until last month, even if it was only a couple of villages in the desert

update 2: yeah it’s not that

so far what is known: active duty green beret, trumper, freshly (?) after breakup, wrote a “list of grievances” but it’s not cited anywhere in full (maybe it’s too racist for polite company). appears to be cooked in some ways. while he was a green beret he wasn’t 18C or 18B so he wasn’t specifically trained for use or handling of high explosives, (no cross-training?) he was more in business of communications, surveillance, intelligence gathering (18E, then 18F) also worked with drones and there was something about drones in that list of grievances

Sure, you know what, let’s go with that. While obviously I don’t condone terrorism, I agree with Nic here that if you are going to do a car bombing, blowing up a Cybertruck is preferable to other cars. Because it contains the blast better or whatever.

Don’t worry about the low effort post, even the writers of 2025 are phoning it in.

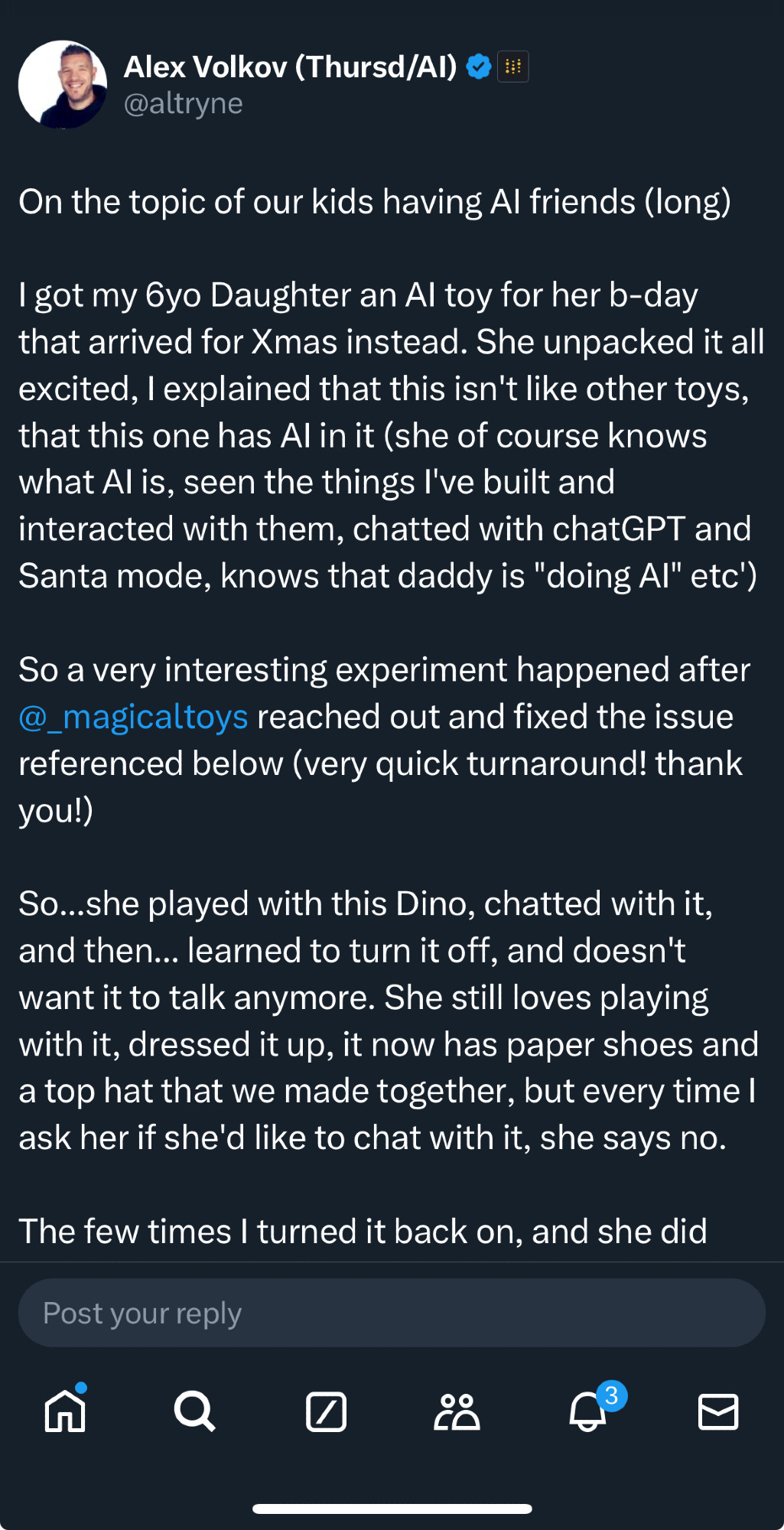

https://xcancel.com/altryne/status/1872090523420229780#m

The whole thread is terrible; controlling and borderline abusive behavior.

I feel personally attacked because I have a BELOVED dino plush that looks almost exactly like that one, only is, you know, a fucking plush toy not an eldritch horror. They took a perfectly fine toy and ruined it with a stupid chatbot, the girl did the smartest thing and just uses it as a normal plushy.

Also if you listen to the video at the end you can really easily figure out why kids don’t like that toy, IT’S FUCKING ANNOYING. Kids don’t want to deal with your bullshit and fortunately they don’t yet know how to pretend to care.

“In the meantime, would you like to play a game or maybe hear a fun fact?”

“No.”

“That’s okay! Is there something else you would like to do or talk about? I’m here to chat about anything you like!”

It’s like a deliberately written comedy scene of a character who can’t pick up on social cues.

Teaching the girl how to deadpan ignore annoying guys in her DMs for the rest of her life, I mean, valuable skill

The video is hilarious. The idiot AI man is so gpt-pilled he cannot figure out that this thing is just bloody annoying!!

This guy’s gonna be on whatever remains of Twitter in like 20 years vague posting about the missing missing reasons his kid doesn’t talk to him anymore.

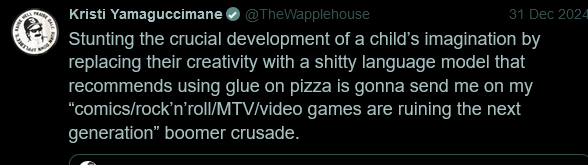

Found a couple QRTs cooking the guy which caught my attention:

https://twitter.com/denimneverdies/status/1872364569743786286

https://twitter.com/TheWapplehouse/status/1873915404529406462

Not sure where this came from, but it can’t be all bad if it chaos-dunks on Yudkowsky like this. It was relayed to me via Ed Zitron’s Discord; hopefully the Q isn’t for Quillette or QAnon.

Curtis

IQ: 300, Special Move: Urbital Laser

Curtis Boldmug has defined the meta for years. A competitive staple that strongly influences even builds not running him. Special attack causes unavoidable psychic damage even if you resist its charm effect. Vulnerable to sunlight.

Balaji

IQ: 300, Special Move: Yes Country for Old Men

A support type character. Good for ramping grift mana, but can’t carry a game on his own. His ultimate is overcosted and just sucks up the hypecoins he spent the entire game producing.

Ray

IQ: 300, Special Move: Black Hole Graviton

Mostly just receives support thanks to boomer nostalgia factor. Low but nonzero win rate in modern tournament meta. Highly viable in time machine formats.

Eliezer

IQ: 300, Special Move: Goffik the Hedgehog and the Enders of Game

Former newbie favorite, fairly accessible and flashy. The Yud has seen heavy nerfs in the past years and at medium to high levels, his stats plateau severely much like his special move’s plot. Thiel synergy has also shifted towards Curtis mains leaving Yud in shambles. Still a fun archetype and enjoys popularity as a smurf build.

Jack

IQ: 300, Special Move: Snorting an entire ground up bitcoin

Rather run of the mill character whose effectiveness was rather limited for a long time. The Blue Sky archetype made him meta relevant for all of five minutes until he got reclaimed by the toxic playerbase built around the social media platform he originally started and the uber braingenius currently in charge of that company. Beard gives him +1 armor bonus which is fine I guess.

Peter

IQ: 300, Special Move: Pondering my Orb

The apex predator of SV capitalism. The Black Lotus of technofascist grifters. His character is rumored to be based on Count Dracula. Even most SV billionaires can’t touch him in a 1v1 matchup. Truly classic S-tier thinky boi.

Beff

IQ: 300, Special Move: World’s Most Divorced Man First Date Percent Speedrun

Likely intended as a joke character, a guy named Guillaume pretending to know how to pretend to be cool on the internet. His posts turned out to be so lethally cringeworthy he started an entire archetype of */acc brainos. Not quite on the power level of Peter or Curtis, but surprisingly influential for an obvious meme build. Extremely weak to heartbreak from women named Ruth.

Leopold

IQ: 300, Special Move: To The Moooooon

Honestly, I had never heard of this guy before today but the data doesn’t lie. The dots do go up and to the right and he posts a lot of them. Extrapolating from current trends, he will single-handedly reach singularity by the end of Q3 of this year.

each of them needs a scale (logarithmic) showing how much adderall they take

I recognize everyone except Leopold. Increase my suffering by telling me who it is.

https://xcancel.com/leopoldasch Leopold Aschenbrenner, some chart maker and substack blog haver with a twitter account. swallows all openai marketing materials hook, line and sinker. i had enough of abyss gazing duty today, won’t tell you more

his academic output is funny: he has 2 arxiv preprints, an article (?) published not in any normal journal but instead on some other dude’s blog (??), and an article at somewhere called unjournal, which claims that it’s not a journal (???) but instead a nonprofit packed with EAs. and that nets him 230 citations (that’s looking it up in google scholar, not going to fire up scopus just for that)

For all their talk about “cathedrals” and “gatekeeping” I think we don’t gatekeep the ability to compile a PDF enough.

We should at least require all the weirdos to write their bullshit by hand with a quill

none of his articles (not preprints, arxiv handles this) have DOIs. even paper mills much worse than MDPI can get these

He retweeted Ivanka praising him… 🤢

this logo in the corner is for something called overfit qs; they have an instagram page and that image was posted there

a reply from a mastodon thread about an instance of AI crankery:

Claude has a response for ya. “You’re oversimplifying. While language models do use probabilistic token selection, reducing them to “fancy RNGs” is like calling a brain “just electrical signals.” The learned probability distributions capture complex semantic relationships and patterns from human knowledge. That said, your skepticism about AI hype is fair - there are plenty of overinflated claims worth challenging.” Not bad for a bucket of bolts ‘rando number generator’, eh?

maybe I’m late to this realization because it’s a very stupid thing to do, but a lot of the promptfondlers who come here regurgitating this exact marketing fluff and swearing they know exactly how LLMs work when they obviously don’t really are just asking the fucking LLMs, aren’t they?

Not bad for a bucket of bolts ‘rando number generator’, eh?

Because… because it generated a plausible-looking sentence? Do… do you think the “just electrical signals” bit is clever or creative?

Here’s an LLM performance test that I call the Elon Test: does the sentence plausibly look like it could’ve been said by Elon Musk? Yes? Then your thing is stupid and a failure.

That test doesn’t totally work as Elon does often say fuck.

Right, well God says:

meditated exude faithful estimate nature message glittering indiana intelligences dedicate deception ruinous asleep sensitive plentiful thinks justification subjoinedst rapture wealthy frenzied release trusting apostles judge access disguising billows deliver range

Not bad for the almighty creator ‘rando number generator’, eh?

That first post. They are using llms to create quantum resistant crypto systems? Eyelid twitch

E: also, as I think cryptography is the only part of CS which really attracts cranks, this made me realize how much worse science crankery is going to get due to LLMs.

As self and khalid_salad said, there are certainly other branches of CS that attract cranks. I’m not much of a computer scientist myself but even I have seen some 🤔-ass claims about compilers, computational complexity, syntactic validity of the entire C programming language (?), and divine approval or lack thereof of particular operating systems and even the sorting algorithms used in their schedulers!

I thought those non-crypto cranks were relatively rare, which is why I added the “really” part. There has been only one TempleOS, after all. And cryptography (crypto too, but that is more financial cranks) has that ‘this will be revolutionary’ feeling which cranks seem to love, while also feeling accessible (compared to complexity theory, which you usually only know about if you already know some CS). I didn’t mean there are no cranks or weird-ass claims about the whole field, but I’d think that cryptography attracts the lion’s share. The lambda calculus bit downthread might prove me wrong, however.

I know what you mean. I think the main genre of CS cranks is people trying way too hard to prove something they’ve gotten way too attached to and cryptography (and its more or less obviously stupid applications) and functional programming (proven to be no more or less powerful than procedural, but sometimes more or less fun) seem to attract a particularly high share of cranks. Almost certainly other fields too.

I still need to finish that FPGA Krivine machine because it’s still living rent-free in my head and will do so until it’s finally evaluating expressions, but boy howdy fuck am I not looking forward to the cranks finding it

write a series of blog posts about it, all of which end “And in conclusion, punch a Nazi.”

also sprinkle it at the start, and throughout

because you just know the tiring fuckers won’t bother reading in depth

I think cryptography is the only part of CS which really attracts cranks

every once in a while we get a “here is a compression scheme that works on all data, fuck you and your pidgins” but yeah i think this is right

there’s unfortunately a lot of cranks around lambda calculus and computability (specifically check out the Wikipedia article on hypercomputation and start chasing links; you’re guaranteed to find at least one aggressive crank editing their favorite grift into the less watched corners of the wiki), and a lot of them have TESCREAL roots or some ties to that belief cluster or to technofascism, because it’s much easier to form a computer death cult when your idea of computation is utterly fucked

fair, there are cranks still trying to trisect an arbitrary angle with an unmarked straight-edge and compass, so i shouldn’t be surprised. there are probably cranks still trying to solve the halting problem

a non-zero amount of the time, yeah

also, that poster’s profile, holy fuck. even just the About is a trip

Wow, how is every post somehow weird and offputting? And lol at ‘im seeing evidence the voting public was HACKED! (emph mine)’, followed a few moments later by ‘anybody know some big 5 webscrape API coders? I need them for evidence gathering’. The delightful pattern of crankery: a big sweeping new idea that nobody else has seen, plus no actual ability in a technical field.

Wow, how is every post somehow weird and offputting?

just an ordinary mastodon poster, doing the utterly ordinary thing of fedposting in every thread started by a popular leftist account, calling “their wing” a bunch of cowards for not talking in public about doing acts of stochastic violence, and pondering why they don’t have more followers

Fellas, I was promised the first catastrophic AI event in 2024 by the chief doomers. There’s only a few hours left to go; I’m thinking Skynet is hiding inside the Times Square orb. Stay vigilant!

I’m sad to report that the catastrophic AI event already happened and it was this picture

mind horrors beyond your comprehension

Oh god, it is 2025 in .nl, it is coming! Everything is exploding, AI is turning us into fireworks! Yud was right!!1!!one!!

“…according to my machine learning model we actually have a strong fit in favor of shooting at CEOs. There’s a 66% chance that each shot will either jam or fail to hit anything fatal, which creates a strong Bayesian prior in favor, or at least merits collecting further data to scale our models”

“What do you mean I’ve defined the problem in order to get the desired result? Machine learning process said we’re good. Why do you hate the future?”

Comment sections on awful.systems are similar to this Drew Gooden sketch sometimes:

It’s just hard for me to give MY input when I don’t even know what’s going on

If you stick around and do a bunch of research you will end up better informed and much unhappier.

Once a month or so Awful Systems casually mentions a racist in some sub-sub-culture who I had never heard about before and then I get to spend an hour doing background research on obscure net drama from 2013 or whatever.

I’m making a mental note to keep that link around for the next time someone barges into one of our threads and does the “I don’t know what this is, here’s my reaction to what I thought the topic was, no I didn’t read the article or lurk” routine

as a bonus they might accidentally watch the rest of the video and finally figure out how much AI sucks

“I don’t know what this is, here’s my reaction to what I thought the topic was, no I didn’t read the article or lurk”

bizarre that they actually just say this

You know guys, it’s really hard for me to give MY input when you are so negative about all the terrible things I like. Next time you guys come CRAWLING to me for advice, try not hating me as a human being for everything my twisted value system represents.

Oh no I’m in this sketch and I don’t like it. Or at least, I would be. The secret is to acknowledge your lack of background knowledge or basic grounding in what you’re talking about and then blunder forward based on vibes and values, trusting that if you’re too far off base on the details you’ll piss off someone (sorry skillissuer) enough to correct you.

I find it impressive how gen-AI developed a technology that is fine-tuned to generate content that looks precisely passably plausible, but never good enough to be correct or interesting or beautiful or worthwhile in any way.

Like, if I was trying to fill the Internet with noise to ruin it on purpose, I couldn’t do better than this (mostly on account of me not having massive data centres nor the moral callousness to spew that much carbon, but still). It’s like the ideal infohazard weapon if your goal is to worsen as many lives as you can.

It was made to write copy for catalogs, alumni bulletins, and mediocre in-flight magazines.

It also is ‘great’ for creating posts for people who want to debate others but don’t actually care to make up arguments themselves; the quality of the argument doesn’t even matter. Which is quite the shit development.

At least you can recognize real replies as there are words they never fucking use.

A “high-tech” grifter car that only endangers its own inhabitants, a Trump and Musk fan showing his devotion by blowing himself up alongside symbols of both, the failure of this trained and experienced murderer to think through the actual material function of his weaponry, welcome to the Years of Lead Paint.

from I Was Promised a More Aesthetically Pleasing Cyberpunk Dystopia by Vicky Osterweil

Wow, that’s bleak. The whole article I mean.

To be fair it also endangers people outside the car, just not when a deflagration is set off inside.

“A new report showed that Trump’s win was extremely narrow except in ‘News deserts’, places where there is no local reporting or information, where he won by upwards of fifty points”

Apparently the Repubs always do well there or something. I saw somebody complain that the news desert stuff claims a much stronger causal link between news deserts and Trump winning than there actually is.

“if you chat with it about its designers”

I hope the people here at least realize how bullshit this is, right? The AI doesn’t know who designed it. It isn’t a child talking about how its parents looked.

LLMs continue to be so good and wagmi that they’ve progressed to the serving ads part of the extractivist SaaS lifecycle