ai excels at some specific tasks. the chatbots they push us to are a gimmick rn.

Reminds me of “biotech is Godzilla”. Sepultura version of course

The biggest problem with AI is that they’re illegally harvesting everything they can possibly get their hands on to feed it, they’re forcing it into places where people have explicitly said they don’t want it, and they’re sucking up massive amounts of energy and water to create it, undoing everyone else’s progress in reducing energy use, and raising prices for everyone else at the same time.

Oh, and it also hallucinates.

In a Venn Diagram, I think your “illegally harvesting” complaint is a circle fully inside the “owned by the same few people” circle. AI could have been an open, community-driven endeavor, but now it’s just mega-rich corporations stealing from everyone else. I guess that’s true of literally everything, not just AI, but you get my point.

Eh I’m fine with the illegal harvesting of data. It forces the courts to revisit the question of what copyright really is and hopefully erodes the stranglehold that copyright has on modern society.

Let the companies fight each other over whether it’s okay to pirate every video on YouTube. I’m waiting.

So far, the result seems to be “it’s okay when they do it”

Yeah… Nothing to see here, people, go home, work harder, exercise, and don’t forget to eat your vegetables. Of course, family first and god bless you.

I would agree with you if the same companies challenging copyright (protecting the intellectual and creative work of “normies”) were not also aggressively wielding copyright against the same people they are stealing from.

With the amount of corporate power tightly integrated with the governmental bodies in the US (and now with DOGE dismantling oversight) I fear that whatever comes out of this is that humans own nothing and corporations own everything. Death of free independent thought and creativity.

Everything you do, say and create is instantly marketable, sellable by the major corporations and you get nothing in return.

The world needs something a lot more drastic than a copyright reform at this point.

AI scrapers illegally harvesting data are destroying smaller and open source projects. Copyright law is not the only victim

https://thelibre.news/foss-infrastructure-is-under-attack-by-ai-companies/

They’re not illegally harvesting anything. Copyright law is all about distribution. As much as everyone loves to think that when you copy something without permission you’re breaking the law the truth is that you’re not. It’s only when you distribute said copy that you’re breaking the law (aka violating copyright).

All those old school notices (e.g. “FBI Warning”) are 100% bullshit. Same for the warning the NFL spits out before games. You absolutely can record it! You just can’t share it (or show it to more than a handful of people but that’s a different set of laws regarding broadcasting).

I download AI (image generation) models all the time. They range in size from 2GB to 12GB. You cannot fit the petabytes of data they used to train the model into that space. No compression algorithm is that good.

The same is true for LLM, RVC (audio models) and similar models/checkpoints. I mean, think about it: If AI is illegally distributing millions of copyrighted works to end users they’d have to be including it all in those files somehow.
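To put rough numbers on the size argument above, here is a back-of-the-envelope sketch. The 1 PB dataset size is an assumption (the comment only says "petabytes"), and the function name is made up for illustration; the 2 GB and 12 GB checkpoint sizes are the ones quoted above.

```python
# Back-of-the-envelope check: how much compression would a checkpoint need
# in order to literally contain its training data? All figures are assumptions.

GB = 10**9
PB = 10**15


def required_compression_ratio(dataset_bytes: int, model_bytes: int) -> float:
    """Bytes of training data that each byte of the model would have to hold."""
    return dataset_bytes / model_bytes


# Assumed: a 1 PB training set, and the 2 GB / 12 GB checkpoints mentioned above.
dataset_bytes = 1 * PB
for model_gb in (2, 12):
    ratio = required_compression_ratio(dataset_bytes, model_gb * GB)
    print(f"{model_gb} GB model: would need roughly {ratio:,.0f}:1 compression")
```

Under those assumptions the ratio lands somewhere in the tens to hundreds of thousands to one. This only shows that a checkpoint cannot hold everything it was trained on; it does not rule out a model memorising some individual items.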

Instead of thinking of an AI model like a collection of copyrighted works think of it more like a rough sketch of a mashup of copyrighted works. Like if you asked a person to make a Godzilla-themed My Little Pony and what you got was that person’s interpretation of what Godzilla combined with MLP would look like. Every artist would draw it differently. Every author would describe it differently. Every voice actor would voice it differently.

Those differences are the equivalent of the random seed provided to AI models. If you throw something at a random number generator enough times you could–in theory–get the works of Shakespeare. Especially if you ask it to write something just like Shakespeare. However, that doesn’t mean the AI model literally copied his works. It’s just making its best guess (it’s literally guessing! That’s how it works!).
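A toy sketch of the seed idea, assuming a made-up three-word probability table in place of a real model (the words, weights and `sample_words` helper are all invented for illustration): the same seed reproduces the same "guess", while a different seed will usually give a different one.

```python
import random

# Toy "model": an invented probability table for the next word.
# These words and weights are illustrative, not taken from any real model.
NEXT_WORD_WEIGHTS = {"pony": 0.5, "lizard": 0.3, "kaiju": 0.2}


def sample_words(seed: int, count: int = 5) -> list[str]:
    """Draw `count` words from the table using a generator seeded with `seed`."""
    rng = random.Random(seed)  # private generator, so runs are reproducible
    words = list(NEXT_WORD_WEIGHTS)
    weights = list(NEXT_WORD_WEIGHTS.values())
    return rng.choices(words, weights=weights, k=count)


print(sample_words(seed=1))  # some sequence of words
print(sample_words(seed=1))  # same seed, same sequence
print(sample_words(seed=2))  # different seed, usually a different sequence
```

Real image and text models sample from vastly larger distributions, but the role of the seed is the same in spirit: it picks which of the many plausible outputs you get.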

The problem with being like… super pedantic about definitions, is that you often miss the forest for the trees.

Illegal or not, seems pretty obvious to me that people saying illegal in this thread and others probably mean “unethically”… which is pretty clearly true.

I wasn’t being pedantic. It’s a very fucking important distinction.

If you want to say “unethical” you say that. Law is an orthogonal concept to ethics. As anyone who’s studied the history of racism and sexism would understand.

Furthermore, it’s not clear that what Meta did actually was unethical. Ethics is all about how human behavior impacts other humans (or other animals). If a behavior has a direct negative impact that’s considered unethical. If it has no impact or positive impact that’s an ethical behavior.

What impact did OpenAI, Meta, et al have when they downloaded these copyrighted works? They were not read by humans–they were read by machines.

From an ethics standpoint that behavior is moot. It’s the ethical equivalent of trying to measure the environmental impact of a bit traveling across a wire. You can go deep down the rabbit hole and calculate the damage caused by mining copper and laying cables but that’s largely a waste of time because it completely loses the narrative that copying a billion books/images/whatever into a machine somehow negatively impacts humans.

It is not the copying of this information that matters. It’s the impact of the technologies they’re creating with it!

That’s why I think it’s very important to point out that copyright violation isn’t the problem in these threads. It’s a path that leads nowhere.

Just so you know, still pedantic.

The irony of choosing the most pedantic way of saying that they’re not pedantic is pretty amusing though.

The issue I see is that they are using the copyrighted data, then making money off that data.

…in the same way that someone who’s read a lot of books can make money by writing their own.

I hate to be the one to break it to you but AIs aren’t actually people. Companies claiming that they are “this close to AGI” doesn’t make it true.

The human brain is an exception to copyright law. Outsourcing your thinking to a machine that doesn’t actually think makes this something different and therefore should be treated differently.

Do you know someone who’s read a billion books and can write a new (trashy) book in 5 mins?

deleted by creator

Part of my point is that a lot of everyday rules do break down at large scale. Like, ‘drink water’ is good advice - but a person can still die from drinking too much water. And having a few people go for a walk through a forest is nice, but having a million people go for a walk through a forest is bad. And using a couple of quotes from different sources to write an article for a website is good; but using thousands of quotes in an automated method doesn’t really feel like the same thing any more.

That’s what I’m saying. A person can’t physically read billions of books, or do the statistical work to put them together to create a new piece of work from them. And since a person cannot do that, no law or existing rule currently takes that possibility into account. So I don’t think we can really say that a person is ‘allowed to’ do that. Rather, it’s just an undefined area. A person simply cannot physically do it, and so the rules don’t have to consider it. On the other hand, computer systems can now do it. And so rather than pointing to old laws, we have to decide as a society whether we think that’s something we are ok with.

I don’t know what the ‘best’ answer is, but I do think we should at least stop to think about it carefully; because there are some clear downsides that need to be considered - and probably a lot of effects that aren’t as obvious which should also be considered!

This is an interesting argument that I’ve never heard before. Isn’t the question more about whether ai generated art counts as a “derivative work” though? I don’t use AI at all but from what I’ve read, they can generate work that includes watermarks from the source data, would that not strongly imply that these are derivative works?

If you studied loads of classic art then started making your own would that be a derivative work? Because that’s how AI works.

The presence of watermarks in output images is just a side effect of the prompt and its similarity to training data. If you ask for a picture of an Olympic swimmer wearing a purple bathing suit and it turns out that only a hundred or so images in the training data match that sort of image–and most of them included a watermark–you can end up with a kinda-sorta similar watermark in the output.

It is absolutely 100% evidence that they used watermarked images in their training. Is that a problem, though? I wouldn’t think so since they’re not distributing those exact images. Just images that are “kinda sorta” similar.

If you try to get an AI to output an image that matches someone else’s image nearly exactly… is that the fault of the AI or the end user, specifically asking for something that would violate another’s copyright (with a derivative work)?

Sounds like a load of techbro nonsense.

By that logic mirroring an image would suffice to count as derivative work since it’s “kinda sorta similar”. It’s not the original, and 0% of pixels match the source.

“And the machine, it learned to flip the image by itself! Like a human!”

It’s a predictive keyboard on steroids, let’s not pretend that it can create anything but noise with no input.
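For anyone who hasn’t seen what a plain predictive keyboard does under the hood, here is a minimal sketch using bigram counts. The corpus and function names are made up for illustration, and this is not how an LLM is built; it is only the "keyboard" end of the comparison.

```python
from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count, for every word, which words follow it in the text."""
    words = text.lower().split()
    table: dict[str, Counter] = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        table[current][following] += 1
    return table


def suggest(table: dict[str, Counter], word: str, n: int = 3) -> list[str]:
    """Return up to `n` most frequent followers of `word` (empty if unseen)."""
    return [w for w, _ in table[word.lower()].most_common(n)]


# Tiny invented corpus, just enough to show the mechanism.
corpus = "the cat sat on the mat and the cat ate the fish"
table = train_bigrams(corpus)
print(suggest(table, "the"))    # most frequent follower comes first
print(suggest(table, "zebra"))  # unseen word: nothing to suggest
```

With no matching input the toy has nothing to offer, which is the point being made above; how far that analogy stretches to large models is exactly what this thread is arguing about.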

Oh, and it also hallucinates.

Oh, and people believe the hallucinations.

I see the “AI is using up massive amounts of water” being proclaimed everywhere lately, however I do not understand it, do you have a source?

My understanding is this probably stems from people misunderstanding data center cooling systems. Most of these systems are closed loop so everything will be reused. It makes no sense to “burn off” water for cooling.

data centers are mainly air-cooled, and two innovations contribute to the water waste.

the first one was “free cooling”, where instead of using a heat exchanger loop you just blow (filtered) outside air directly over the servers and out again, meaning you don’t have to “get rid” of waste heat, you just blow it right out.

the second one was increasing the moisture content of the air on the way in with what are basically giant carburettors in the air stream. the wetter the air, the more heat it can take from the servers.

so basically we now have data centers designed like cloud machines.

Edit: Also, apparently the water they use becomes contaminated and they use mainly potable water. here’s a paper on it

Also the energy for those datacenters has to come from somewhere and non-renewable options (gas, oil, nuclear generation) also use a lot of water as part of the generation process itself (they all rely on using the fuel to generate the steam that powers the turbines which generate the electricity) and for cooling.

steam that runs turbines tends to be recirculated. that’s already in the paper.

Oh, and it also hallucinates.

This is arguably a feature depending on how you use it. I’m absolutely not an AI acolyte. It’s highly problematic in every step. Resource usage. Training using illegally obtained information. This wouldn’t necessarily be an issue if people who aren’t tech broligarchs weren’t routinely getting their lives destroyed for this, and if the people creating the material being used for training also weren’t being fucked…just capitalism things I guess. Attempts by capitalists to cut workers out of the cost/profit equation.

If you’re using AI to make music, images or video… you’re depending on those hallucinations.

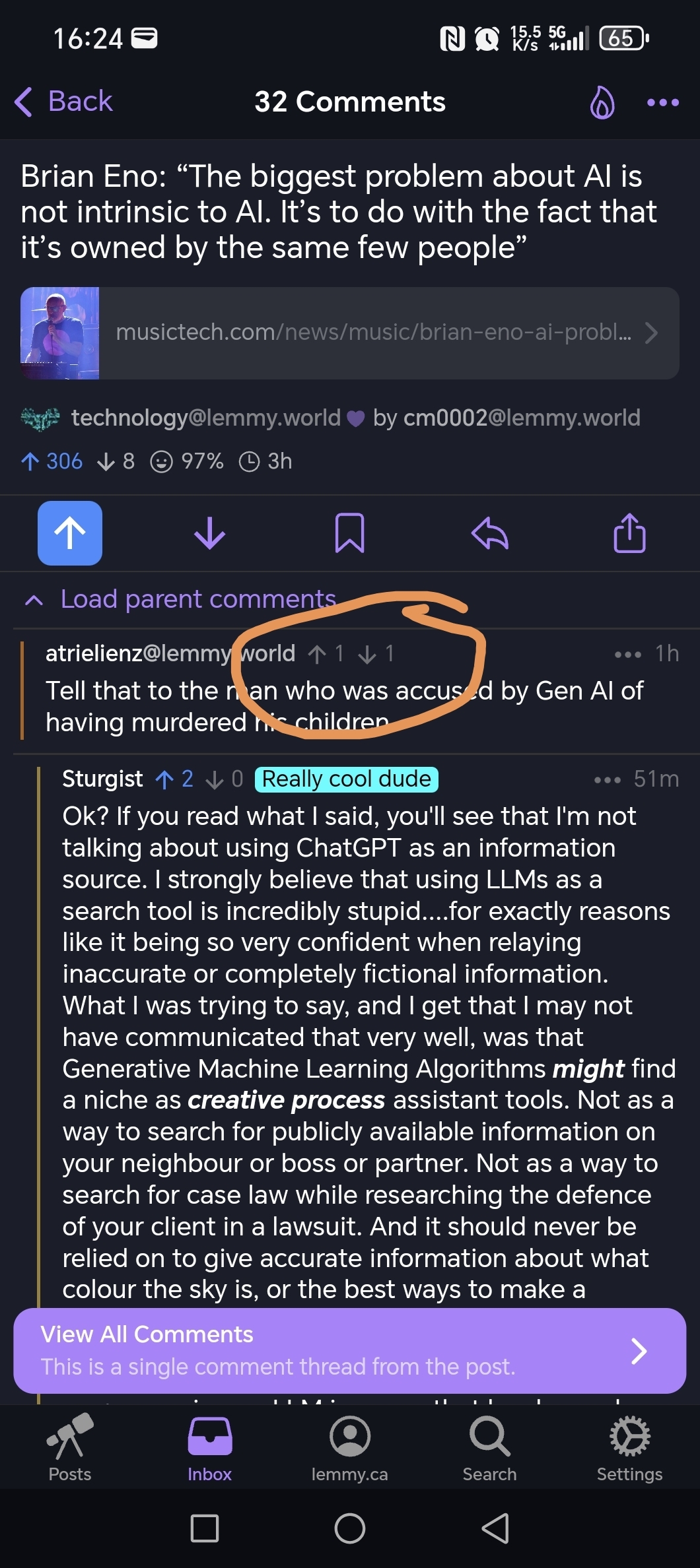

I run a Stable Diffusion model on my laptop. It’s kinda neat. I don’t make things for a profit, and now that I’ve played with it a bit I’ll likely delete it soon. I think there’s room for people to locally host their own models, preferably trained with legally acquired data, to be used as a tool to assist with the creative process. The current monetisation model for AI is fuckin criminal…

Tell that to the man who was accused by Gen AI of having murdered his children.

Ok? If you read what I said, you’ll see that I’m not talking about using ChatGPT as an information source. I strongly believe that using LLMs as a search tool is incredibly stupid…for exactly reasons like it being so very confident when relaying inaccurate or completely fictional information.

What I was trying to say, and I get that I may not have communicated that very well, was that Generative Machine Learning Algorithms might find a niche as creative process assistant tools. Not as a way to search for publicly available information on your neighbour or boss or partner. Not as a way to search for case law while researching the defence of your client in a lawsuit. And it should never be relied on to give accurate information about what colour the sky is, or the best ways to make a custard using gasoline.

Does that clarify things a bit? Or do you want to carry on using an LLM in a way that has been shown to be unreliable, at best, as some sort of gotcha…when I wasn’t talking about that as a viable use case?

lol. I was just saying in another comment that lemmy users 1. Assume a level of knowledge of the person they are talking to or interacting with that may or may not be present in reality, and 2. Are often intentionally mean to the people they respond to so much so that they seem to take offense on purpose to even the most innocuous of comments, and here you are, downvoting my valid point, which is that regardless of whether we view it as a reliable information source, that’s what it is being marketed as and results like this harm both the population using it, and the people who have found good uses for it. And no, I don’t actually agree that it’s good for creative processes as assistance tools and a lot of that has to do with how you view the creative process and how I view it differently. Any other tool at the very least has a known quantity of what went into it and Generative AI does not have that benefit and therefore is problematic.

and here you are, downvoting my valid point

Wasn’t me actually.

valid point

You weren’t really making a point in line with what I was saying.

regardless of whether we view it as a reliable information source, that’s what it is being marketed as and results like this harm both the population using it, and the people who have found good uses for it. And no, I don’t actually agree that it’s good for creative processes as assistance tools and a lot of that has to do with how you view the creative process and how I view it differently. Any other tool at the very least has a known quantity of what went into it and Generative AI does not have that benefit and therefore is problematic.

This is a really valid point, and if you had taken the time to actually write this out in your first comment, instead of “Tell that to the guy that was expecting factual information from a hallucination generator!” I wouldn’t have reacted the way I did. And we’d be having a constructive conversation right now. Instead you made a snide remark, seemingly (personal opinion here, I probably can’t read minds) intending it as an invalidation of what I was saying, and then being smug about my taking offence to you not contributing to the conversation and instead being kind of a dick.

Not everything has to have a direct correlation to what you say in order to be valid or add to the conversation. You have a habit of ignoring parts of the conversation going around you in order to feel justified in whatever statements you make regardless of whether or not they are based in fact or speak to the conversation you’re responding to and you are also doing the exact same thing to me that you’re upset about (because why else would you go to a whole other post to “prove a point” about downvoting?). I’m not going to even try to justify to you what I said in this post or that one because I honestly don’t think you care.

It wasn’t you (you claim), but it could have been and it still might be you on a separate account. I have no way of knowing.

All in all, I said what I said. We will not get the benefits of Generative AI if we don’t 1. deal with the problems that are coming from it, and 2. Stop trying to shoehorn it into everything. And that’s the discussion that’s happening here.

because why else would you go to a whole other post to “prove a point” about downvoting?

It wasn’t you (you claim)

I do claim. I have an alt, didn’t downvote you there either. Was just pointing out that you were also making assumptions. And it’s all comments in the same thread, hardly me going to an entirely different post to prove a point.

We will not get the benefits of Generative AI if we don’t 1. deal with the problems that are coming from it, and 2. Stop trying to shoehorn it into everything. And that’s the discussion that’s happening here.

I agree. And while I personally feel like there’s already room for it in some people’s workflow, it is very clearly problematic in many ways. As I had pointed out in my first comment.

I’m not going to even try to justify to you what I said in this post or that one because I honestly don’t think you care.

I do actually! Might be hard to believe, but I reacted the way I did because I felt your first comment was reductive, and intentionally trying to invalidate and derail my comment without actually adding anything to the discussion. That made me angry because I want a discussion. Not because I want to be right, and fuck you for thinking differently.

If you’re willing to talk about your views and opinions, I’d be happy to continue talking. If you’re just going to assume I don’t care, and don’t want to hear what other people think…then just block me and move on. 👍

We spend energy on the most useless shit, so why are people suddenly using it as an argument against AI? Did you ever see someone complaining about Pixar wasting energy to render their movies? Or 3D studios rendering TV ads?

Well, the harvesting isn’t illegal (yet), and I think it probably shouldn’t be.

It’s scraping, and it’s hard to make that part illegal without collateral damage.

But that doesn’t mean we should do nothing about these AI fuckers.

In the words of Cory Doctorow:

Web-scraping is good, actually.

Scraping against the wishes of the scraped is good, actually.

Scraping when the scrapee suffers as a result of your scraping is good, actually.

Scraping to train machine-learning models is good, actually.

Scraping to violate the public’s privacy is bad, actually.

Scraping to alienate creative workers’ labor is bad, actually.

We absolutely can have the benefits of scraping without letting AI companies destroy our jobs and our privacy. We just have to stop letting them define the debate.

It varies massively depending on the ML.

For example, things like voice generation or object recognition can absolutely be done with entirely legit training datasets - you can literally pay a bunch of people to read some texts and train a voice generation engine with the recordings, and the work in object recognition is mainly tagging what’s in the images on top of a ton of easily made images of things - a researcher can literally go around taking photos to make their dataset.

Image generation, on the other hand, not so much - you can only go so far with plain photos a researcher can take on the street, and the models tend to rely a lot on the artistic work of people who have never authorized the use of their work for training. And LLMs clearly cannot be done without scraping billions of pieces of actual work from billions of people.

Of course, what we tend to talk about here when we say “AI” is LLMs, which are IMHO the worst of the bunch.

I don’t care much about them harvesting all that data, what I do care about is that despite essentially feeding all human knowledge into LLMs they are still basically useless.

My biggest gripe with AI is the same problem I have with anything crypto: its out-of-control power consumption relative to the problem it solves or purpose it serves.

Don’t throw all crypto under the bus. Only Bitcoin and other proof-of-work protocols are power hungry. 2nd and 3rd generation crypto mostly use proof of stake and ZK-rollups for security. Much more energy efficient.
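For context on why proof of work burns power, here is a toy hash puzzle in the proof-of-work style. It is a simplified sketch, not Bitcoin’s actual protocol: the `mine` function, the input string and the "leading hex zeros" rule are assumptions chosen for illustration.

```python
import hashlib


def mine(data: str, difficulty: int) -> tuple[int, int]:
    """Brute-force a nonce so that sha256(data + nonce) starts with
    `difficulty` hex zeros. Returns (nonce, number of hashes tried)."""
    prefix = "0" * difficulty
    nonce = 0
    while True:
        digest = hashlib.sha256(f"{data}{nonce}".encode()).hexdigest()
        if digest.startswith(prefix):
            return nonce, nonce + 1
        nonce += 1


# Each extra zero multiplies the expected work by about 16 (one hex digit).
for difficulty in (1, 2, 3, 4):
    nonce, attempts = mine("example block", difficulty)
    print(f"difficulty {difficulty}: {attempts} hashes tried")
```

The only way to win the puzzle is to keep guessing, so the security budget is paid in computation. Proof of stake replaces that guessing race with validators putting up funds as collateral, which is where the efficiency claim above comes from.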

Sure, but despite all the crypto bros’ assurances to the contrary, the only real-world applications for it are buying drugs, paying ransoms and getting scammed. Which means that any non-zero amount of energy is too much energy.

There are some use cases below, and none use proof of work.

https://hbr.org/2022/01/how-walmart-canada-uses-blockchain-to-solve-supply-chain-challenges

Yes, most people buy, sit on, and then hopefully sell with a profit.

However, there are a large number of devs building useful things (supply chain, money transfer, digital identity). Most as good as, but not yet better than incumbent solutions.

My main challenge is the energy misconception. The entire Ethereum network runs on the energy equivalent of a single wind turbine.

And honestly even if it did serve its actual purpose, the cumulative power consumption would still be a point of debate.

Yeah, but at that point you’d have to consider it against how much power the traditional banking system uses.

Here we are using recycled bags, banning straws, putting explosive refrigerant in fridges and using LED lights in everything

lol, sucker. none of that does shit and industry was already destroying the planet just fine before ai came along.

yes. we are cancer. i live on as little as possible but i don’t delude myself into thinking my actions have any effect on the whole.

i spent nearly 20 years not using paper towels until i realized how pointless it was. now i throw my trash out the window. we’re all fucked. if we want to change things, there’s only one tool that will fix it. until people realize that, i really don’t fucking care any more.

Idk if it’s the biggest problem, but it’s probably top three.

Other problems could include:

- Power usage

- Adding noise to our communication channels

- AGI fears if you buy that (I don’t personally)

Dead Internet theory has never been a bigger threat. I believe that’s the number one danger - endless quantities of advertising and spam shoved down our throats from every possible direction.

We’re pretty close to it, most videos on YouTube and websites that exist are purely just for some advertiser to pay that person for a review or recommendation

Could also put up:

- Massive collections of people are exploited in order to train various AI systems.

- Machine learning apps that create text or images from prompts are supposed to be supplementary but businesses are actively trying to replace their workers with this software.

- Machine learning image generation currently has diminishing returns for training as we pump exponentially more content into them.

- Machine learning text and image generated content self-poisons its generator’s sample pool, greatly diminishing the ability of these systems to learn from real world content.

There’s actually a much longer list if we expand to talking about other AI systems, like the robot systems we’re currently training to use in automatic warfare. There’s also the angle of these image and text generation systems being used for political manipulation and scams. There are a lot of terrible problems created by this tech.

Power usage

I’m generally a huge eco guy but on power usage particularly I view this largely as a government failure. We have had access to incredible energy resources that the government has either chosen not to implement or has effectively dismantled.

It reminds me a lot of how recycling has been pushed so hard onto the general public instead of any government laws on plastic usage and waste disposal.

It’s always easier to wave your hands and blame “society” than it is to hold the actual wealthy and powerful accountable.

The problem with AI is that it pirates everyone’s work and then repackages it as its own and enriches the people that did not create the copyrighted work.

I mean, it’s our work; the result should belong to the people.

This is where “universal basic income” comes into play

More broadly, I would expect UBI to trigger a golden age of invention and artistic creation because a lot of people would love to spend their time just creating new stuff without the need to monetise it but can’t under the current system, and even if a lot of that would be shit or crazily niche, the more people doing it and the freer they are to do it, the more really special and amazing stuff will be created.

Unfortunately one will not lead to the other.

It will lead to the plot of Elysium.

That’s what all artists have done since the dawn of ages.

Truer words have never been said.

AI has a vibrant open source scene and is definitely not owned by a few people.

A lot of the data to train it is only owned by a few people though. It is record companies and publishing houses winning their lawsuits that will lead to dystopia. It’s a shame to see so many actually cheering them on.

I’d say the biggest problem with AI is that it’s being treated as a tool to displace workers, but there is no system in place to make sure that the “value” created by AI (I’m not convinced commercial AI has done anything valuable) is redistributed to the workers that it has displaced.

Welcome to every technological advancement ever applied to the workforce

The system in place is “open weights” models. These AI companies don’t have a huge head start on the publicly available software, and if the value is there for a corporation, most any savvy solo engineer can slap together something similar.

Two intrinsic problems with the current implementations of AI are that they are insanely resource-intensive and require huge training sets. Neither of those is directly a problem of ownership or control, though both favor larger players with more money.

If gigantic amounts of capital weren’t available, then the focus would be on improving the models so they don’t need GPU farms running off nuclear reactors plus the sum total of all posts on the Internet ever.

Same as always. There is no technology capitalism can’t corrupt

The biggest problem with AI is the damage it’s doing to human culture.

Either the article editing was horrible, or Eno is wildly uninformed about the world. Creation of AIs is NOT the same as social media. You can’t blame a hammer for some evil person using it to hit someone in the head, and there is more to ‘hammers’ than just assaulting people.

Eno does strike me as the kind of person who could use AI effectively as a tool for making music. I don’t think he’s team “just generate music with a single prompt and dump it onto YouTube” (AI has ruined study lo fi channels) - the stuff at the end about distortion is what he’s interested in experimenting with.

There is a possibility for something interesting and cool there (I think about how Chuck Person’s Eccojams is just like short loops of random songs repeated in different ways, but it’s an absolutely revolutionary album) even if in effect all that’s going to happen is music execs thinking they can replace songwriters and musicians with “hey siri, generate a pop song with a catchy chorus” while talentless hacks inundate YouTube and Bandcamp with shit.

Yeah, Eno actually has made a variety of albums and art installations using simple generative AI for musical decisions, although I don’t think he does any advanced programming himself. That’s why it’s really odd to see comments in an article that imply he is really uninformed about AI…he was pioneering generative music 20-30 years ago.

I’ve come to realize that there is a huge amount of misinformation about AI these days, and the issue is compounded by there being lots of clumsy, bad early AI works in various art fields, web journalism etc. I’m trying to cut back on discussing AI for these reasons, although as an AI enthusiast, it’s hard to keep quiet about it sometimes.

Eno is more a traditional algorist than “AI” (by which people generally mean neural networks)

I could see him using neural networks to generate and intentionally pick and loop short bits with weird anomalies or glitchy sounds. That’s the route I’d like AI in music to go, so maybe that’s what I’m reading in, but it fits Eno’s vibe and philosophy.

AI as a tool not to replace other forms of music, but doing things like training it on contrasting music genres or self made bits or otherwise creatively breaking and reconstructing the artwork.

John Cage was all about ‘stochastic’ music - composing based on what he divined from the I Ching. There are people who have been kicking around ideas like this for longer than the AI bubble has been around - the big problem will be digging out the good stuff when the people typing “generate a three hour vapor wave playlist” can upload ten videos a day…

Sure. I worked in the game industry and sometimes AI can mean ‘pick a random number if X occurs’ or something equally simple, so I’m just used to the term used a few different ways.

Totally fair

And those people want to use AI to extract money and to lay off people in order to make more money.

That’s “guns don’t kill people” logic.

Yeah, the AI absolutely is a problem. For those reasons along with it being wrong a lot of the time as well as the ridiculous energy consumption.

Yeah, the AI absolutely is a problem.

AI is not a problem by itself; the problem is that most of the people who make decisions in the workplace about these things do not understand what they are talking about, and even less what the technology is capable of.

My impression is that AI now is what blockchain was some years ago: the solution to every problem, which was of course false.

The real issues are capitalism and the lack of green energy.

If the arts were well funded, if people were given healthcare and UBI, if we had, at the very least, switched to nuclear like we should’ve decades ago, we wouldn’t be here.

The issue isn’t a piece of software.

No?

Anyone can run an AI, even on the weakest hardware; there are plenty of small open models for this.

Training an AI requires very strong hardware; however, this is not an impossible hurdle, as the models on Hugging Face show.

But the people with the money for the hardware are the ones training it to put more money in their pockets. That’s mostly what it’s being trained to do: make rich people richer.

This completely ignores all the endless (open) academic work going on in the AI space. Loads of universities have AI data centers now and are doing great research that is being published out in the open for anyone to use and duplicate.

I’ve downloaded several academic models and all commercial models and AI tools are based on all that public research.

I run AI models locally on my PC and you can too.

That is entirely true and one of my favorite things about it. I just wish there was a way to nurture more of that and less of the, “Hi, I’m Alvin and my job is to make your Fortune-500 company even more profitable…the key is to pay people less!” type of AI.

But you can make this argument for anything that is used to make rich people richer. Even something as basic as pen and paper is used everyday to make rich people richer.

Why attack the technology if it’s the rich people you are against and not the technology itself?

It’s not even the people; it’s their actions. If we could figure out how to regulate its use so its profit-generation capacity doesn’t build on itself exponentially at the expense of the fair treatment of others and instead actively proliferate the models that help people, I’m all for it, for the record.

We shouldn’t do anything ever because poors

Yah, I’m an AI researcher and with the weights released for DeepSeek anybody can run an enterprise-level AI assistant. To run the full model natively, it does require $100k in GPUs, but if one had that hardware it could easily be fine-tuned with something like LoRA for almost any application. Then that model can be distilled and quantized to run on gaming GPUs.
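As a rough illustration of why LoRA makes fine-tuning cheap: instead of updating a full weight matrix, it trains two thin matrices whose product is added to the frozen weights. The sketch below only counts parameters; the 4096×4096 layer size and rank 8 are assumed example values, not DeepSeek’s actual dimensions.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Trainable parameters when fine-tuning the whole d_out x d_in matrix."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters with LoRA: B is d_out x rank, A is rank x d_in.
    The original matrix stays frozen; only A and B are trained."""
    return rank * (d_out + d_in)


# Assumed example: one 4096 x 4096 projection matrix, adapter rank 8.
d_out = d_in = 4096
rank = 8

full = full_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)
print(f"full fine-tune: {full:,} trainable parameters")
print(f"LoRA (rank {rank}): {lora:,} trainable parameters")
print(f"reduction: {full // lora}x fewer")
```

Gradients and optimizer state are kept only for the small matrices, though the frozen model still has to fit in memory for the forward pass.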

It’s really not that big of a barrier. Yes, $100k in hardware is, but from a non-profit entity perspective that is peanuts.

Also, adding a vision encoder for images to DeepSeek would not be theoretically that difficult for the same reason. In fact, I’m working on research right now that finds GPT-4o and o1 have similar vision capabilities, implying it’s the same first-layer vision encoder and then textual chain-of-thought tokens are read by subsequent layers. (This is a very recent insight as of last week by my team, so if anyone can disprove that, I would be very interested to know!)

Would you say your research is evidence that the o1 model was built using data/algorithms taken from OpenAI via industrial espionage (like Sam Altman is purporting without evidence)? Or is it just likely that they came upon the same logical solution?

Not that it matters, of course! Just curious.

Well, OpenAI has clearly scraped everything that is scrapable on the internet. Copyrights be damned. I haven’t actually used DeepSeek enough to make a strong analysis, but I suspect Sam is just mad they got beat at their own game.

The real innovation that isn’t commonly talked about is the invention of Multi-head Latent Attention (MLA), which is what drives the dramatic performance increases in both memory (59x) and computation (6x) efficiency. It’s an absolute game changer and I’m surprised OpenAI hasn’t released their own MLA model yet.

While on the subject of stealing data, I have been of the strong opinion that there is no such thing as copyright when it comes to training data. Humans learn by example and all works are derivative of those that came before, at least to some degree. Thus, if humans can’t be accused of using copyrighted text to learn how to write, then AI shouldn’t be either. Just my hot take that I know is controversial outside of academic circles.
