Ouch.

They really should have shared the entire token context. I get hating on LLMs, but context matters.

That’s less “seeking help on homework” than “having it do your work for you”.

But it’s incredibly bad.

At least it’s a better headline than the last article I read about it. That one said something along the lines of “during back-and-forth conversation about challenges and solutions for aging adults…”, like we all couldn’t see literal questions being pasted in one by one.

A simple “wrong” would have done just fine.

The original thread of questions went on for a long time and included multiple questions about psychological, emotional, and physical abuse.

LLMs go more and more off the rails as their context gets longer (i.e. the longer the convo runs). Most folks have probably noticed by now that every so often a long-running convo starts to feel a little… schizophrenic as it drags on.

Combine a very long convo with a lot of tokens and a subject that revolves around discussing and defining types of abuse, and I can see how the LLM would eventually generate a response like that at random once it goes off the rails.

This happened to me and my friends this summer. The three of us were talking about AI technology, and one friend who is an engineer wanted to demonstrate all this, so he opened ChatGPT on his phone and we started asking random questions. We were just having fun and taking turns asking about food, birds, geology, houses, construction, math equations, medicine, the meaning of life, and a bunch of other silly things. After about half an hour it went off the rails and started giving bizarre answers that tried to combine everything we had asked about up to that point. Completely crazy responses, like a meaning-of-life explanation that included birds, peanuts, and how a bicycle works. We wanted to record the responses because they were so off the wall, but by the time we started recording the audio we had been disconnected, the conversation reset, and everything went back to normal.

There is a new conversational space beyond that which is known to man. It is a space as vast as your mom and as timeless as corporate greed. It is the middle ground between light and shadow, between the observed and the deduced, and it lies between the pit of man’s assumptions and the summit of his hubris. This is the dimension of hallucination. It is an area which we call “The Twilight Zone.”

Your comment went off the rails in your second paragraph so you might want to take a Turing test.

wat?

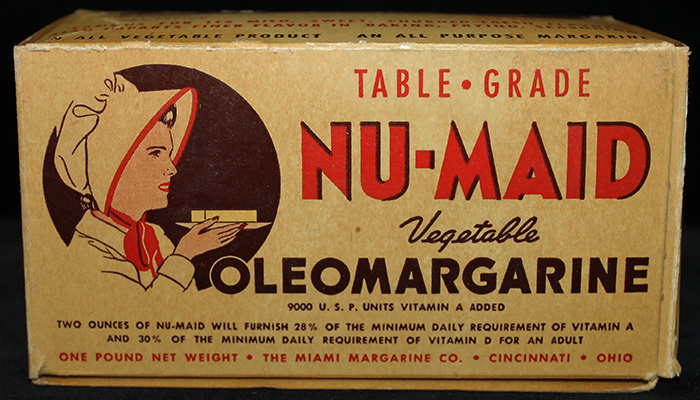

I guess that’s what happens when the AI is trained on Reddit data.

“This.”

So much this

I see what you did there

I did Nazi that coming!!!

Thanks for the gold kind stranger!

Something something Hell in a Cell with shitty watercolour announcer’s table

Damn Loch Ness Monster

How bad at doing homework is she that the AI had a mental breakdown trying to teach her!?

The easy part is making a program that can pretend to be human. The hard part is getting it to not be an asshole.

How do you pretend to be human, without being an asshole? Isn’t that the essence of humankind?

Need to base AI off of a Canadian. Worked for the Pentaverate AI.