Hi all!

I will soon acquire a pretty beefy unit compared to my current setup (a 3-node server, each node with 16 cores, 512 GB RAM and 32 TB of storage).

Currently I run TrueNAS and Proxmox on bare metal and most of my storage is made available to apps via SSHFS or NFS.

I recently started looking for “modern” distributed filesystems and found some interesting S3-like/compatible projects.

To name a few:

- MinIO

- SeaweedFS

- Garage

- GlusterFS

I like the idea of abstracting the filesystem to allow me to move data around, play with redundancy and balancing, etc.

My most important services are:

- Plex (Media management/sharing)

- Stash (Like Plex 🙃)

- Nextcloud

- Caddy with Adguard Home and Unbound DNS

- Most of the Arr suite

- Git, Wiki, File/Link sharing services

As you can see, there is a lot of downloading/streaming/torrenting of files across services. Smaller services are on a Docker VM on Proxmox.

The current setup is messy because it grew organically, but since I will upgrade to brand-new metal, I am looking for suggestions on the pillars.

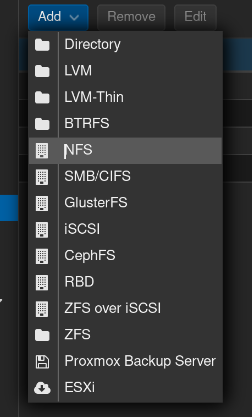

So far, I am considering installing a Proxmox cluster with the 3 nodes and host VMs for the heavy stuff and a Docker VM.

How do you see the file storage portion? Should I try a full/partial plunge into S3-compatible object storage? What architecture/tech would be interesting to experiment with?

Or should I stick with tried-and-true, boring solutions like NFS Shares?

Thank you for your suggestions!

“Boring”? I’d be more interested in what works without causing problems. NFS is bulletproof.

> NFS is bulletproof.

For it to be bulletproof, it would help if it came with security built in. Kerberos is a complex mess.

Yeah, I’ve ended up setting up VLANs in order to not deal with encryption.

You are 100% right, I meant for the homelab as a whole. I do it for self-hosting purposes, but the journey is a hobby of mine.

So exploring more experimental technologies would be a plus for me.

Most of the things you listed require some very specific constraints to even work, let alone work well. If you’re working with just a few machines, no storage array or high bandwidth networking, I’d just stick with NFS.

As a recently former HPC/supercomputer dork: NFS scales really well. All this talk of encryption etc. is weird; you normally just do that at the link layer if you’re worried about security between systems. That, plus v4 to reduce some metadata chattiness, and you’re good to go. I’ve tried scaling Ceph and S3 for latency on 100/200G links. By far, NFS is easier to scale than all the rest. For a homelab? NFS and call it a day. All the clustering filesystems will make you do a lot more work than just throwing “hard” into your NFS mount options and letting clients block I/O while you reboot, which for home is probably easiest.
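As a rough illustration of the “hard” and v4 mount options mentioned above, here is a small Python sketch that assembles an `/etc/fstab` entry. The server name, export path and mount point are made-up placeholders, and pinning `vers=4.2` is an assumption; use whatever version your server actually offers.

```python
def nfs_fstab_line(server, export, mountpoint, version="4.2", extra_options=()):
    """Return one /etc/fstab line for an NFS mount (illustrative helper)."""
    # "hard" makes clients block and retry I/O while the server is away,
    # instead of handing errors back to applications.
    # "_netdev" tells the boot process to wait for the network first.
    options = ["hard", f"vers={version}", "_netdev", *extra_options]
    # fstab fields: device, mount point, fs type, options, dump, fsck pass
    return f"{server}:{export} {mountpoint} nfs {','.join(options)} 0 0"


if __name__ == "__main__":
    # Hypothetical host and paths, for illustration only.
    print(nfs_fstab_line("nas.example.lan", "/tank/media", "/mnt/media"))
```

With a hard mount, a server reboot shows up on clients as stalled I/O that resumes on its own, which is the behaviour described above.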

I agree as well. No reason to not use it. If there were better ways to build an alternative, one would exist.

What’s wrong with NFS? It is performant and simple.

By default it is unencrypted and unauthenticated, and permissions rely on IDs the client can fake.

May or may not be a problem in practice, one should think about their personal threat model.

Mine are read only and unauthenticated because they’re just media files, but I did add unneeded encryption via ktls because it wasn’t too hard to add (I already had a valid certificate to reuse)

NFS is good for hypervisor level storage. If someone compromises the host system you are in trouble.

> If someone compromises the host system you are in trouble.

Not only the host. You have to trust every client to behave, as @forbiddenlake already mentioned, NFS relies on IDs that clients can easily fake to pretend they are someone else. Without rolling out all the Kerberos stuff, there really is no security when it comes to NFS.
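To make the point about fakeable IDs concrete, here is a toy Python model of the trust relationship when no Kerberos is involved. It is not the NFS wire protocol; the paths, UIDs and names are all invented for illustration.

```python
from dataclasses import dataclass

# Hypothetical export contents: path -> owning UID (owner-only files assumed).
FILE_OWNERS = {
    "/export/home/alice/taxes.pdf": 1000,
    "/export/home/bob/notes.txt": 1001,
}


@dataclass(frozen=True)
class ReadRequest:
    claimed_uid: int  # supplied by the client; the server does not verify it
    path: str


def server_allows_read(request: ReadRequest) -> bool:
    """Owner-only check that trusts whatever UID the client sent."""
    return FILE_OWNERS.get(request.path) == request.claimed_uid


if __name__ == "__main__":
    path = "/export/home/alice/taxes.pdf"
    honest_bob = ReadRequest(claimed_uid=1001, path=path)
    # Anyone with root on a client machine can present a different UID.
    lying_bob = ReadRequest(claimed_uid=1000, path=path)
    print("honest request allowed:", server_allows_read(honest_bob))
    print("spoofed request allowed:", server_allows_read(lying_bob))
```

The server-side check is the same in both cases; the only thing that changed is the number the client chose to send.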

You misunderstand. The hypervisor is the client. Stuff higher in the stack only sees raw storage. (By hypervisors I also mean docker and kubernetes) From a security perspective you just set an IP allow list

Sure, if you have exactly one client that can access the server and you can ensure physical security of the actual network, I suppose it is fine. Still, those are some severe limitations and show how limited the ancient NFS protocol is, even in version 4.

NFS is fine if you can lock it down at the network level, but otherwise it’s Not For Security.
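For the “lock it down at the network level” approach, a minimal Python sketch that turns an IP allow list into an `/etc/exports` line might look like this. The export path and addresses are placeholders, and defaulting to read-only with `root_squash` is my assumption, not something the posters specified.

```python
import ipaddress


def exports_line(path, allowed_networks, read_only=True):
    """Build one /etc/exports line restricted to the given networks."""
    access = "ro" if read_only else "rw"
    clients = []
    for network in allowed_networks:
        # ip_network() raises ValueError on typos such as "10.0.10.0/33".
        net = ipaddress.ip_network(network)
        clients.append(f"{net}({access},root_squash)")
    return f"{path} {' '.join(clients)}"


def is_allowed(client_ip, allowed_networks):
    """Return True if client_ip falls inside any allowed network."""
    address = ipaddress.ip_address(client_ip)
    return any(address in ipaddress.ip_network(n) for n in allowed_networks)


if __name__ == "__main__":
    # Hypothetical storage VLAN plus one extra host.
    allow = ["10.0.10.0/24", "10.0.20.5"]
    print(exports_line("/tank/media", allow))
    print(is_allowed("10.0.10.42", allow), is_allowed("192.168.1.7", allow))
```

An allow list like this is only as trustworthy as the network it sits on, which is why the thread keeps coming back to dedicated VLANs or a separate storage LAN.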

NFS + Kerberos?

But everything I read about NFS and so on: You deploy it on a dedicated storage LAN and not in your usual networking LAN.

I tried it once. NFSv4 isn’t simple like NFSv3 is. Fewer systems support it too.

Gotta agree. Even better if backed by zfs.

It is a pain to figure out how to give everyone the same user id. I only have a couple computers at home. I’ve never figured out how to make LDAP work (including laptops which might not have network access when I’m on the road). Worse some systems start with userid 1000, some 1001. NFS is a real mess - but I use it because I haven’t found anything better for unix.
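Short of setting up LDAP, one low-tech way to at least spot the 1000-versus-1001 problem is to compare `/etc/passwd` from each machine. A minimal Python sketch, using invented sample data (the usernames and UIDs below are hypothetical):

```python
def parse_passwd(text):
    """Map username -> UID from /etc/passwd-formatted text."""
    users = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        fields = line.split(":")
        if len(fields) < 3:
            continue  # skip malformed lines
        users[fields[0]] = int(fields[2])
    return users


def uid_mismatches(passwd_a, passwd_b, min_uid=1000):
    """Return {username: (uid_a, uid_b)} for users whose UIDs differ."""
    a, b = parse_passwd(passwd_a), parse_passwd(passwd_b)
    return {
        name: (a[name], b[name])
        for name in sorted(a.keys() & b.keys())
        # Ignore system accounts below min_uid on both hosts.
        if a[name] != b[name] and max(a[name], b[name]) >= min_uid
    }


if __name__ == "__main__":
    desktop = "root:x:0:0:root:/root:/bin/bash\nalice:x:1000:1000::/home/alice:/bin/bash\n"
    laptop = "root:x:0:0:root:/root:/bin/bash\nalice:x:1001:1001::/home/alice:/bin/bash\n"
    print(uid_mismatches(desktop, laptop))
```

Any user this reports would see their own files as belonging to someone else over an NFS mount between those two machines.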

I’d only use sshfs if there’s no other alternative. Like if you had to copy over a slow internet link and sync wasn’t available.

NFS is fine for local network filesystems. I use it everywhere and it’s great. Learn to use autos and NFS is just automatic everywhere you need it.

*autofs

sshfs is somewhat unmaintained, only “high-impact issues” are being addressed https://github.com/libfuse/sshfs

I would go for NFS.

And if you need to mount a directory over SSH, I can recommend rclone and its mount subcommand.

But NFS has mediocre snapshotting capabilities (unless his setup also includes >10g nics)

I assume you are referring to Filesystem Snapshotting? For what reason do you want to do that on the client and not on the FS host?

I have my NFS storage mounted via 2.5G and use qcow2 disks. It is slow to snapshot…

Maybe I understand your question wrong?

If I understand you correctly, your server is accessing the VM disk images via an NFS share?

That does not sound efficient at all.

No other easy option I figured out.

I didn’t manage to understand iSCSI in the time I had patience for it, and I was desperate to finish the project and use my stuff.

Thus NFS.

Your workload just won’t see much difference with any of them, so take your pick.

NFS is old, but if you add security constraints, it works really well. If you want to tune for bandwidth, try iSCSI; bonus points if you get ZFS-over-iSCSI working with a tuned block size. This last one is blazing fast if you have ZFS at each end and you do ZFS snapshots.

Beyond that, you’re getting into very tuned SAN things, which people build their careers on. It’s a real rabbit hole.

NFS with security does harm performance. For raw throughput it is best to use no encryption. Instead, use physical security.

I don’t know what you’re on about, I’m talking about segregating with vlans and firewall.

If you’re encrypting your SAN connection, your architecture is wrong.

That’s what I thought you were saying.

Oh, OK. I should have elaborated.

Yes, agreed. It’s so difficult to secure NFS that it’s best to treat it like a local connection and just lock it right down, physically and logically.

When I can, I use iSCSI, but tuned NFS is almost as fast. I have a much higher workload than OP, and I am still unable to bottleneck it.

Have you ever used NFS in a larger production environment? Many companies coming from VMware have expensive SAN systems and Proxmox doesn’t have great support for iscsi

Yes, i have. Same security principles in 2005 as today.

Proxmox iscsi support is fine.

It really isn’t.

You can’t automatically create new disks with the create new VM wizard.

Also, I hope you aren’t using the same security principles as in 2005. The landscape has evolved immensely.

Gluster is ~~shit~~ really bad, Garage and MinIO are great. If you want something tested and insanely powerful, go with Ceph; it has everything. Garage is fine for smaller installations, and it’s very new and not that stable yet. Ceph isn’t something you want to jump into without research.

Darn, Garage is the only one that I successfully deployed a test cluster.

I will dive more carefully into Ceph, the documentation is a bit heavy, but if the effort is worth it…

Thanks.

I had great experience with garage at first, but it crapped itself after a month, it was like half a year ago and the problem was fixed, still left me with a bit of anxiety.

You need to know what you are doing with Ceph. It can scale to exabyte levels, but you need to do it right.

I’m using ceph on my proxmox cluster but only for the server data, all my jellyfin media goes into a separate NAS using NFS as it doesn’t really need the high availability and everything else that comes with ceph.

It’s been working great. You can set everything up through the Proxmox GUI and it’ll show up like any other storage for the VMs. You need enterprise-grade NVMe drives for it though, or it’ll chew through them in no time. You also want a separate network connection for Ceph traffic if you’re moving a lot of data.

Very happy with this setup.

I think you will need to have a mix, not everything is S3 compatible.

But I also like S3 quite a lot.

I think I am on the same page.

I will probably keep Plex/Stash out of S3, but Nextcloud could be worth it? (1TB with lots of documents and media.)

How would you go for Plex/Stash storage?

Keeping it as a LVM in Proxmox?

I use Ceph/CephFS myself for my own 671TiB array (382TiB raw used, 252TiB-ish data stored) – I find it a much more robust and better architected solution than Gluster. It supports distributed block devices (RBD), filesystems (CephFS), and object storage (RGW). NFS is pretty solid though for basic remote mounting filesystems.
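The figures quoted above allow a quick back-of-the-envelope check of how much raw space each stored byte costs. The sketch below only does that arithmetic; the comparison layouts (three-way replication, a 4+2 erasure-coded profile) are my own reference points, since the post does not say which layout is in use.

```python
def observed_overhead(raw_used_tib, data_stored_tib):
    """Raw bytes consumed per byte of user data."""
    return raw_used_tib / data_stored_tib


def replication_overhead(copies):
    """Overhead of keeping `copies` full replicas."""
    return float(copies)


def erasure_overhead(k, m):
    """Overhead of erasure coding with k data and m parity chunks."""
    return (k + m) / k


if __name__ == "__main__":
    total_tib, raw_used_tib, data_tib = 671, 382, 252  # figures from the post
    print(f"observed overhead: {observed_overhead(raw_used_tib, data_tib):.2f}x")
    print(f"array fill level:  {raw_used_tib / total_tib:.0%}")
    # Reference points only; not a claim about the poster's configuration.
    print(f"3 replicas:        {replication_overhead(3):.2f}x")
    print(f"EC 4+2 (assumed):  {erasure_overhead(4, 2):.2f}x")
```

The observed ratio comes out at roughly 1.5, well below what three full replicas would cost, which hints at erasure coding or a mix of pool types rather than plain 3x replication.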

I’ve used MinIO as the object store on both Lemmy and Mastodon, and in retrospect I wonder why. Unless you have clustered servers and a lot of data to move it’s really just adding complexity for the sake of complexity. I find that the bigger gains come from things like creating bonded network channels and sorting out a good balance in the disk layout to keep your I/O in check.

I preach this to people everywhere I go and seldom do they listen. There’s no reason for object storage for a non-enterprise environment. Using it in homelabs is just…mostly insane…

Generally yes, but it can be useful as a learning thing. A lot of my homelab use is for practicing with different techs in a setting where, if it melts down, it’s just your stuff. At work they tend to take offense if you break prod.

Fam, the modern alternative to SSHFS is literally SSHFS.

All that said, if your use case is mostly downloading and uploading files but not moving them between remotes, then overlaying WebDAV on whatever you feel comfy with (and that’s already what e.g. Nextcloud does, IIRC) should serve you well.

What are you hosting the storage on? Are you providing this storage to apps, containers, VMs, proxmox, your desktop/laptop/phone?

Currently, most of the data is on a bare-metal TrueNAS.

Since each node will come with 32TB of storage, this would be plenty for the foreseeable future (currently only using 20TB across everything).

The data should be available to Proxmox VMs (for their disk images) and selfhosted apps (mainly Nextcloud and Arr apps).

A bonus would be to have a quick/easy way to “mount” some volume to a Linux Desktop to do some file management.

Proxmox supports ceph natively, and you can mount it from a workstation too, I think. I assume it operates in a shared mode, unlike iscsi.

If the apps are running on a VM in proxmox, then the underlying storage doesn’t matter to them.

NFS is probably the most mature option, but I don’t know if proxmox officially supports it.

Proxmox does support NFS

But let’s say that I would like to decommission my TrueNAS and thus have the storage exclusively on the 3-node server, how would I interlay Proxmox+Storage?

(Much appreciated btw)

I think the best option for distributed storage is ceph.

At least something that’s distributed and fail safe (assuming OP targets this goal).

And if Proxmox doesn’t support it natively, someone could probably still configure it locally on the underlying Debian OS.
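If the three 32TB nodes from the original post end up running replicated Ceph pools, usable space shrinks with the replica count. A small Python sketch of that arithmetic follows; it assumes all raw capacity goes into one replicated pool and ignores filesystem overhead and the headroom Ceph wants for recovery, so treat the results as upper bounds.

```python
def usable_tb(nodes, tb_per_node, replicas):
    """Upper bound on usable capacity for a replicated pool."""
    return nodes * tb_per_node / replicas


if __name__ == "__main__":
    nodes, tb_per_node, current_tb = 3, 32, 20  # figures from the thread
    for replicas in (2, 3):  # 3 is Ceph's default replicated pool size
        usable = usable_tb(nodes, tb_per_node, replicas)
        share = current_tb / usable
        print(f"{replicas} replicas: {usable:.0f} TB usable, "
              f"{share:.1%} taken by the current {current_tb} TB")
```

With three replicas the 96TB of raw disk turns into about 32TB usable, so the current 20TB would already occupy well over half of it.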

NFS gives me the best performance. I’ve tried GlusterFS (not at home, for work), and it was kind of a pain to set up and maintain.