Reject proprietary LLMs, tell people to “just llama it”

Ugh. Don’t get me started.

Most people don’t understand that the only thing it does is ‘put words together that usually go together’. It doesn’t know if something is right or wrong, just if it ‘sounds right’.

Now, if you throw in enough data, it’ll kinda sorta make sense with what it writes. But as soon as you try to verify the things it writes, it falls apart.
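To make the "words that usually go together" point concrete, here is a toy sketch. It is an analogy only: real LLMs are neural networks over tokens, not word-pair counts, and the corpus and function names below are made up for illustration. It shows how picking the most common next word can produce a fluent sentence that the training text never said.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count which word follows which in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def generate(follows, start, max_words=8):
    """Greedily append the most frequent next word. Truth never enters into it."""
    out = [start]
    for _ in range(max_words - 1):
        candidates = follows.get(out[-1])
        if not candidates:
            break
        word = candidates.most_common(1)[0][0]
        out.append(word)
        if word == ".":  # stop at the end of the sentence
            break
    return " ".join(out)

# Hypothetical training text: every sentence in it is "true".
corpus = (
    "paris hosted the olympics . "
    "london hosted the olympics . "
    "our stadium hosted a concert ."
)
model = train_bigrams(corpus)
sentence = generate(model, "our")
print(sentence)  # our stadium hosted the olympics .
```

"hosted the" shows up more often than "hosted a", so the toy model confidently hands our stadium an Olympics, even though every sentence it was trained on was accurate.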

I once asked it to write a small article with a bit of history about my city and five interesting things to visit. In the history bit, it confused two people with similar names who lived 200 years apart. In the ‘things to visit’ bit, it listed two museums by name that are hundreds of miles away. It invented another museum that does not exist. It also happily told me to visit our Olympic stadium. While we do have a stadium, I can assure you we never hosted the Olympics. I’d remember that, as I’m older than said stadium.

The scary bit is: what it wrote was lovely. If you read it, you’d want to visit for sure. You’d have no clue that it was wholly wrong, because it sounds so confident.

AI has its uses. I’ve used it to rewrite a text that I already had and it does fine with tasks like that. Because you give it the correct info to work with.

Use the tool appropriately and it’s handy. Use it inappropriately and it’s a fucking menace to society.

I gave it a math problem to illustrate this and it got it wrong.

If it can’t do that, imagine adding nuance.

Well, math is not really a language problem, so it’s understandable LLMs struggle with it more.

But it means it’s not “thinking” as the public perceives AI.

Hmm, yeah, AI never really did think. I can’t argue with that.

It’s really strange now, if I mentally zoom out a bit, that we have machines that are better at language-based reasoning than at logic-based reasoning (like math or coding).

And then google to confirm the gpt answer isn’t total nonsense

I’ve had people tell me “Of course, I’ll verify the info if it’s important”, which implies that if the question isn’t important, they’ll just accept whatever ChatGPT gives them. They don’t care whether the answer is correct or not; they just want an answer.

That is a valid tactic for programming or how-to questions, provided you know not to unthinkingly drink bleach if it says to.

Have they? Don’t think I’ve heard that once, and I work with people who use ChatGPT themselves.

I’m with you. Never heard that. Never.

Meanwhile Google search results:

- AI summary

- 2x “sponsored” result

- AI copy of Stack Overflow

- AI copy of Geeks4Geeks

- Geeks4Geeks (with AI article)

- the thing you actually searched for

- AI copy of AI copy of Stack Overflow

Should we place bets on how long until ChatGPT responds to anything with:

“Great question! Before I give you a response, let me show you this great video for a new product you’ll definitely want to check out!”

Nah, it’ll be more subtle than that. Just like Brawndo is full of the electrolytes plants crave, responses will be full of the subtle product and brand references marketers crave. And A/B studies performed at massive scale, in real time, on unwitting users and evaluated by other AIs will help them zero in on the most effective way to pepper those in for each personality type it can differentiate.

“Great question! Before I give you a response, let me introduce you to Raid: Shadow Legends!”

Less than a year.

I say 6 months.

I give him 11 minutes.

And my axe!

We have new feature, use it!

No, it’s broken and stupid, I prefer old feature.

… Fine!

*breaks old feature even harder*

I’ve used Google since 2004. I stopped using it this year because, as the parent comment points out, it’s all marketing and AI. I like Qwant. It’s not perfect, but it functions like a previous version of Google.

I have tried a few replacements for Google, but I’ve yet to find anything remotely as effective for searches about things close to me. If I’m looking for a restaurant near me, Kagi, Startpage, and DDG are not good. Is Qwant good for a use case like that? Haven’t heard about it before.

I’ve had some success, but it goes off of your ISP’s server location, so for me it’s not very useful.

Yeah, but at least we can vet that shit better than the unsourced and hallucinated drivel provided by ChatGPT.

Even adding “Reddit” after a search only brings up posts from 7 years ago.

The irony is that Gemini Pro is actually better than ChatGPT (which is not saying a ton, as OpenAI have completely stagnated and even some small open models are better now), but whatever they use for search is beyond horrible.

just call it cgpt for short

Computer Generated Partial Truths

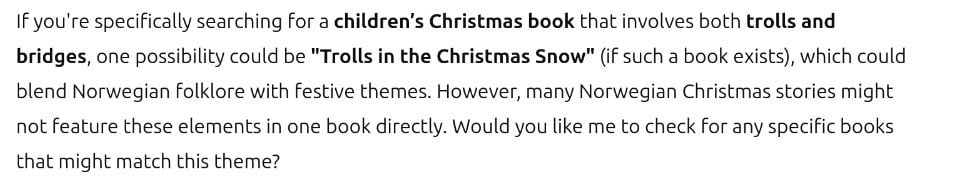

Last night, we tried to use ChatGPT to identify a book that my wife remembers from her childhood.

It didn’t find the book, but instead gave us a title for a theoretical book that could be written that would match her description.

At least it said if it exists, instead of telling you when it was written (hallucinating)

Maybe it’s trying to motivate me to become a writer.

The same happens every time I’ve tried to use it for search. It will be radioactive for this type of thing until someone figures that out. Quite frustrating. If they spent as much time on telling when a user wants objective information with citations as they do on determining whether the response breaks content guidelines, we might actually have something useful. Instead, we get AI slop.

How long until ChatGPT starts responding “It’s been generally agreed that the answer to your question is to just ask ChatGPT”?

Did you ChatGPT this title?

“Did you ChatGPT it?”

I wondered what language this would be an unintended insult in.

Then I chuckled when I ironically realized, it’s offensive in English, lmao.

Did you cat I farted it?

I say, “Just search it.” Not interested in being free advertising for Google.

GPT’s natural language processing is extremely helpful for simple questions that have historically been difficult to Google because they aren’t a concise concept.

The type of thing that is easy to ask but hard to create a search query for, like tip-of-my-tongue questions.

Google used to be amazing at this. You could literally search “who dat guy dat paint dem melty clocks” and get the right answer immediately.

I mean tbf you can still search “who DAT guy” and it will give you Salvador Dali in one of those boxes that show up before the search results.

This is why so much research has been going into AI lately. The trend is already to skip articles and source material and base opinions on clickbait headlines, so relying on AI summaries and search results naturally comes next. People will start to assume any generated response from a ‘trusted search AI’ is true, so there is a ton of value in getting an AI to give truthful and correct responses all of the time, and then being able to edit certain responses to inject whatever truth you want. Then you effectively control what truth is and can selectively edit public opinion by manipulating what people are told is true. Right now we’re also being trained that AI may make things up and not be totally accurate, which gives those running the services a plausible excuse if caught manipulating responses.

I am not looking forward to arguing facts with people citing AI responses as their source for truth. I already know if I present source material contradicting them, they lack the ability to actually read and absorb the material.

“Let’s ask MULTIVAC!”

Chatgpt is this real

This is entirely Google’s fault.

Google intentionally made search worse, but even if they wanted to make it better again, there’s very little they could do. The web itself has an extremely low signal-to-noise ratio, and it’s almost impossible to write an algorithm that lets the signal shine through (while also giving any search results back).

It would still be better if quality search (not extracting more and more money in the short term) was their goal.