Via @rodhilton@mastodon.social

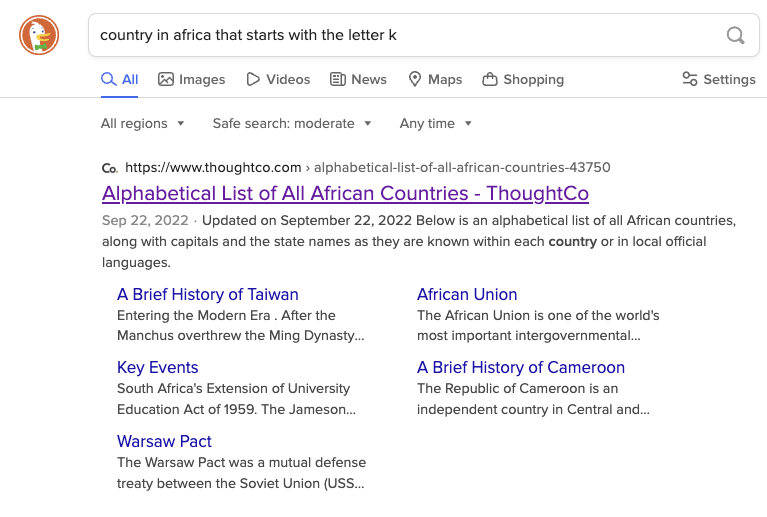

Right now if you search for “country in Africa that starts with the letter K”:

- DuckDuckGo will link to an alphabetical list of countries in Africa which includes Kenya.
- Google, as the first hit, links to a ChatGPT transcript where it claims that there are none, and summarizes to say the same.

This is because ChatGPT at some point ingested this popular joke:

“There are no countries in Africa that start with K.” “What about Kenya?” “Kenya suck deez nuts?”

Removed by mod

Would you happen to have some examples? I don’t disagree that LLMs have more of a use case and application than the cryptoNFT misapplications of blockchain, but I’m honestly not familiar with where they’ve solved real world problems (and not just demonstrated some research breakthroughs, which while impressive in their own respect do not always extend to immediate applications).

They do shitty homework?

Removed by mod

Oh, no, I’ve heard it, I’m just skeptical of their accuracy and reliability, and that skepticism has been borne out by the numerous reports of glitching (“hallucinations” as they insist on calling them, in furtherance of their inappropriate personification of the technology). Moreover, I’ve found their mass theft of others’ work to further call into question the creators’ trustworthiness, which has only been compounded by their overselling of their technology’s capabilities while simultaneously suggesting it’s just untenable to log & cite all the sources that they push into it.

It can supposedly do all you describe, but it can’t effectively credit its sources? It can tutor but it can’t even keep basic information straight? Please. It’s impressive technology, but it’s being overblown because the markets favor exaggeration over facts, at least as long as people can be kept enamored with the fantasy they spin.

Removed by mod